I once handed a student self-assessment to a class of 30, got 30 responses back, and every single one of them rated their participation as “Excellent.”

Not most. All thirty.

I’d watched several of those students stare at the floor for the entire semester. One had missed six sessions. And yet: Excellent.

I didn’t have a motivation problem or an honesty problem. I had a design problem. The rubric was vague, the stakes were unclear, and students did exactly what people do when a task has no teeth: they optimized for the path of least resistance.

This guide is about building self-assessments that don’t have that problem.

This guide is for:

- Classroom teachers who’ve assigned self-assessments and gotten a pile of “I did great” in return

- College instructors wondering if self-evaluation is even worth the time

- L&D professionals adapting self-assessment methods for employee training and upskilling

- Curriculum designers building formative assessment systems that scale

- Department heads who need accountability tools that don’t require constant instructor babysitting

What Is Student Self-Assessment?

It is not a grading tool. It is a metacognitive practice.

That distinction is doing a lot of heavy lifting, and I’ll come back to it. For now, the short version: when you frame self-assessment as grading, students optimize for the grade. When you frame it as a diagnosis, they optimize for accuracy.

The entire architecture of a working system depends on which framing you lead with.

Done right, self-assessment builds self-awareness, supports personalized learning paths, and prepares students for the professional self-evaluation they will face in every job they hold. Done carelessly, it produces a stack of optimistic lies and a mild existential crisis for whoever reads them.

The gap between those two outcomes is mostly design.

Now, before we get to the solutions, I want to spend some time on the problem. Because the reasons self-assessments fail are specific, and if you don’t understand them, you’ll keep building rubrics that look fine on paper and produce nonsense in practice.

How Do I Build a Student Self-Assessment System That Actually Works?

Methods matter, but sequence matters more. Here’s the order I’d follow, and why skipping any of these steps tends to produce the exact data problems this guide describes.

Step 1: Train Before You Assess

Students who’ve never self-assessed before will default to their gut. Their gut is usually wrong.

Before anything high-stakes, run two low-stakes practice rounds using anonymized sample work. Have students rate it against the rubric, then compare their ratings to yours and discuss the gap. That calibration is what makes every future assessment more accurate.
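To make “discuss the gap” concrete, here is a minimal Python sketch of how a calibration round could be scored. The sample names, the 1-4 scale, and the `calibration_bias` helper are assumptions made up for illustration, not part of any particular tool.

```python
from statistics import mean

# Hypothetical data: the instructor's ratings of three anonymized samples (1-4 scale).
instructor = {"sample_a": 2, "sample_b": 4, "sample_c": 3}

# Hypothetical ratings two students gave the same samples.
student_ratings = {
    "student_1": {"sample_a": 4, "sample_b": 4, "sample_c": 4},
    "student_2": {"sample_a": 2, "sample_b": 3, "sample_c": 3},
}


def calibration_bias(ratings, reference):
    """Mean signed difference between a student's ratings and the reference.

    Positive means the student rates higher than the instructor on average;
    negative means lower.
    """
    return mean([ratings[sample] - reference[sample] for sample in reference])


biases = {
    name: calibration_bias(ratings, instructor)
    for name, ratings in student_ratings.items()
}

for name, bias in biases.items():
    print(f"{name}: {bias:+.2f}")
```

A signed average is used here rather than an absolute one so that overrating and underrating stay distinguishable, which is the thing you want to talk about in the follow-up discussion.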

Step 2: Explain Why You’re Doing This

When self-assessments appear without context, students assume the worst. Spend two minutes explaining what you’ll do with the results. “I use these to group you for next week’s workshop” changes everything. Students who believe the data matters become more honest reporters.

Step 3: Reserve the Right to Amend

Tell students upfront: if their self-rating and your observation are more than one level apart, you’ll have a brief conversation about it. That’s it. The possibility of that conversation is usually enough to prompt accurate self-reporting.
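The “more than one level apart” rule is simple enough to check mechanically. Here is a minimal sketch, assuming both ratings sit on the same numeric scale; the student names, the ratings, and the `needs_conversation` helper are hypothetical.

```python
def needs_conversation(self_level, observed_level, tolerance=1):
    """Return True when the two ratings differ by more than `tolerance` levels."""
    return abs(self_level - observed_level) > tolerance


# Hypothetical records: (student, self-rating, instructor observation), 1-4 scale.
records = [
    ("Avery", 4, 2),
    ("Blake", 3, 3),
    ("Casey", 2, 3),
    ("Devon", 1, 3),
]

flagged = [name for name, self_level, observed in records
           if needs_conversation(self_level, observed)]

print(flagged)
```

Note that the check uses the absolute difference, so a student who underrates by two levels gets the same conversation as one who overrates by two.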

Step 4: Connect Results to What Happens Next

This is where the whole system either earns trust or loses it. Use the data visibly. “Group A will focus on argument structure, Group B on source integration” turns a reflection exercise into something with real stakes.
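One way to get from results to groups is to sort each student by their weakest criterion. This is only a sketch under assumptions: the names, scores, and the `group_by_weakest` helper are invented, and a real class would likely need a rule for ties and group sizes.

```python
from collections import defaultdict

# Hypothetical rubric scores per student (1-4 scale, higher is better).
scores = {
    "Avery": {"argument structure": 2, "source integration": 4},
    "Blake": {"argument structure": 4, "source integration": 1},
    "Casey": {"argument structure": 1, "source integration": 3},
}


def group_by_weakest(scores_by_student):
    """Group students under the criterion where each scored lowest.

    On a tie, the criterion listed first in the student's record wins.
    """
    groups = defaultdict(list)
    for student, by_criterion in scores_by_student.items():
        weakest = min(by_criterion, key=by_criterion.get)
        groups[weakest].append(student)
    return dict(groups)


groups = group_by_weakest(scores)

for focus, students in groups.items():
    print(f"{focus}: {', '.join(students)}")
```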

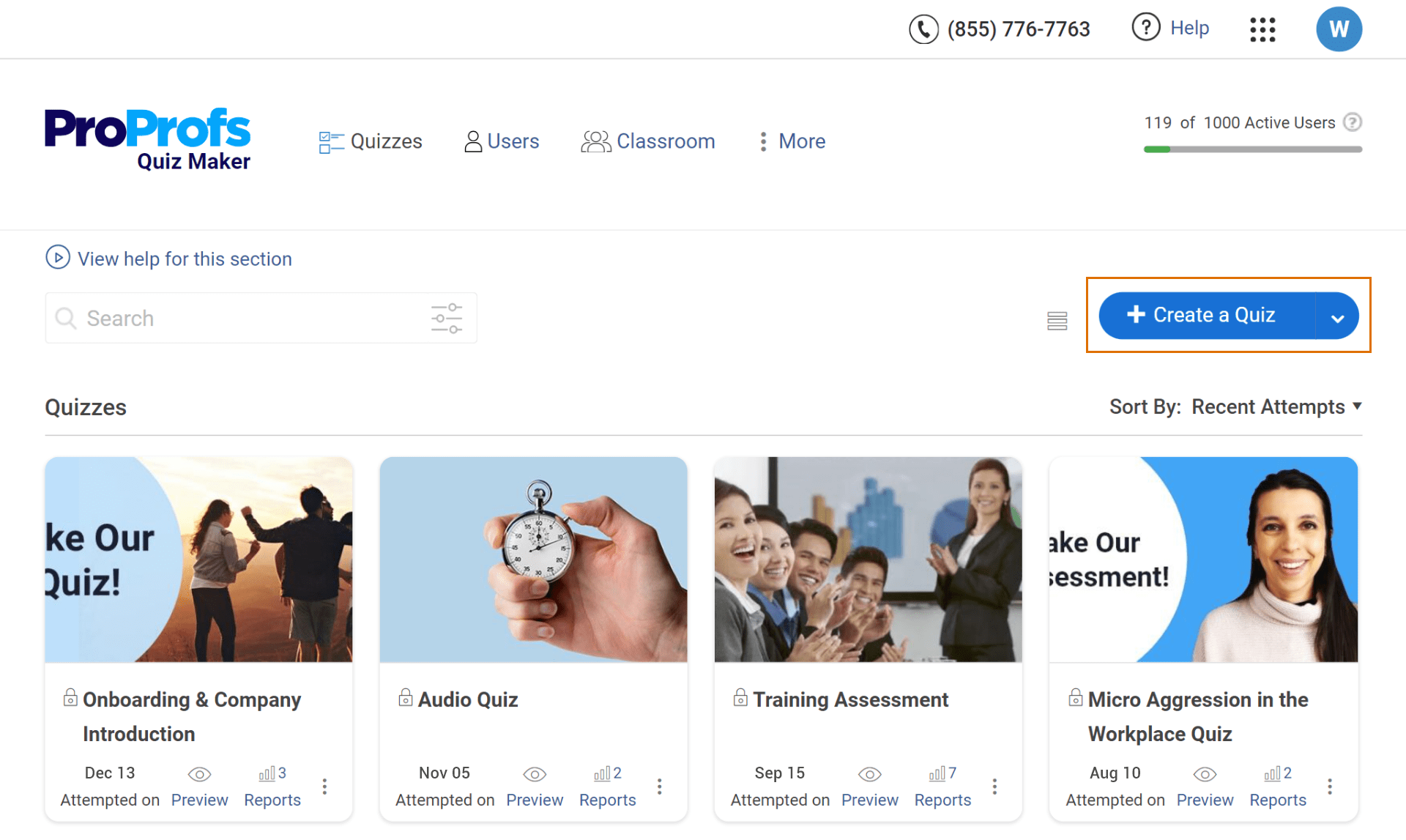

For digital assessments, I use ProProfs Quiz Maker to build and distribute the quiz, then let the scoring and tracking run automatically. Here’s how to set one up:

Using AI:

1. Click “Create a Quiz,” then select “Create with ProProfs AI.”

2. Enter your topic, format, difficulty, and number of questions

3. Review the generated questions, keep what’s useful, cut what isn’t

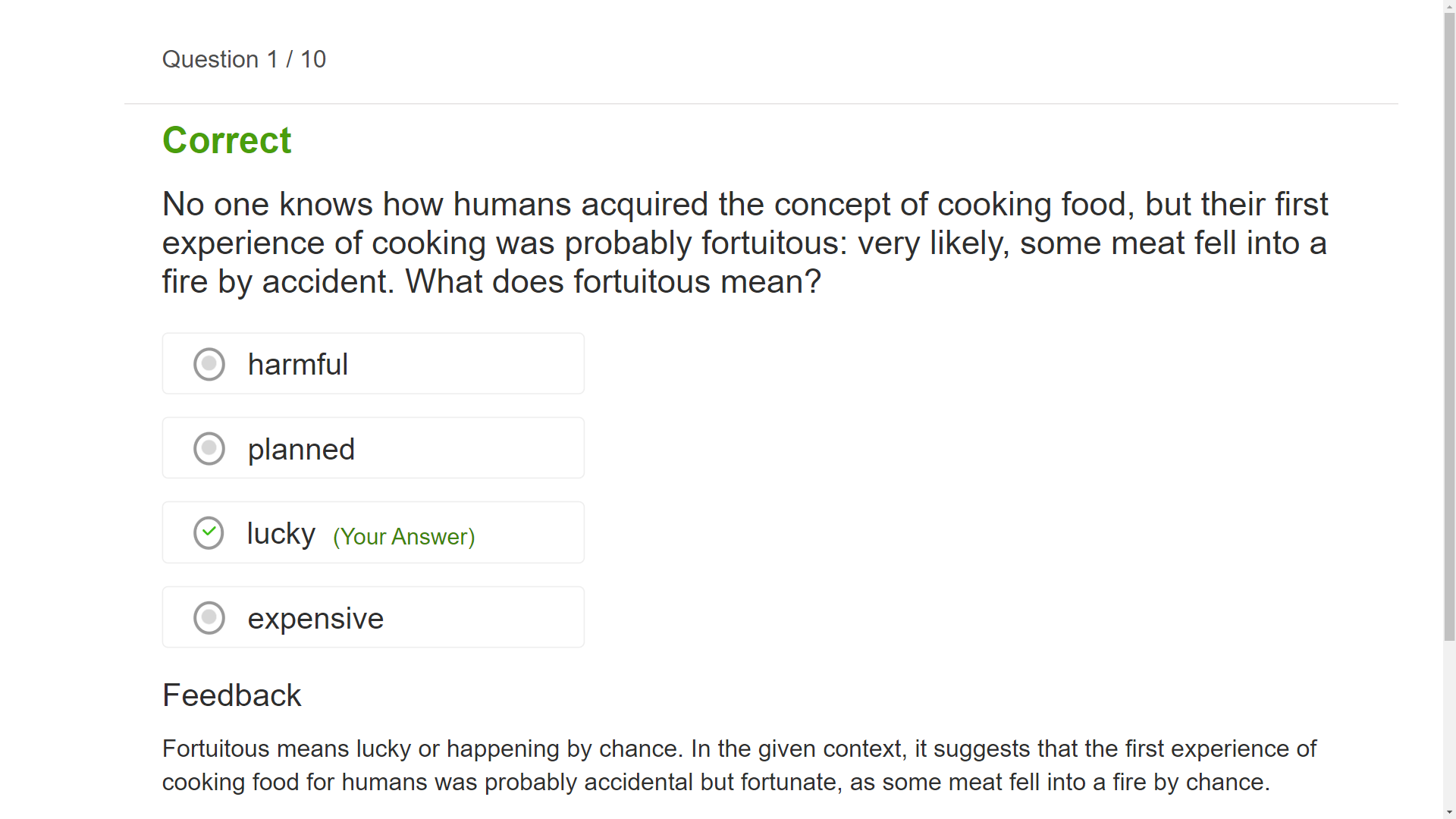

4. Configure scoring and instant per-question feedback

Using a template:

1. Click “Create a Quiz” and browse the template library

2. On the templates page, choose between scored templates, personality templates, and ready-to-use quizzes.

3. Select a template and click “Use this Template.” Enter your name and proceed to the page where you can configure the quiz settings.

From scratch:

1. Click “Create a Quiz,” then “Create Your Own”

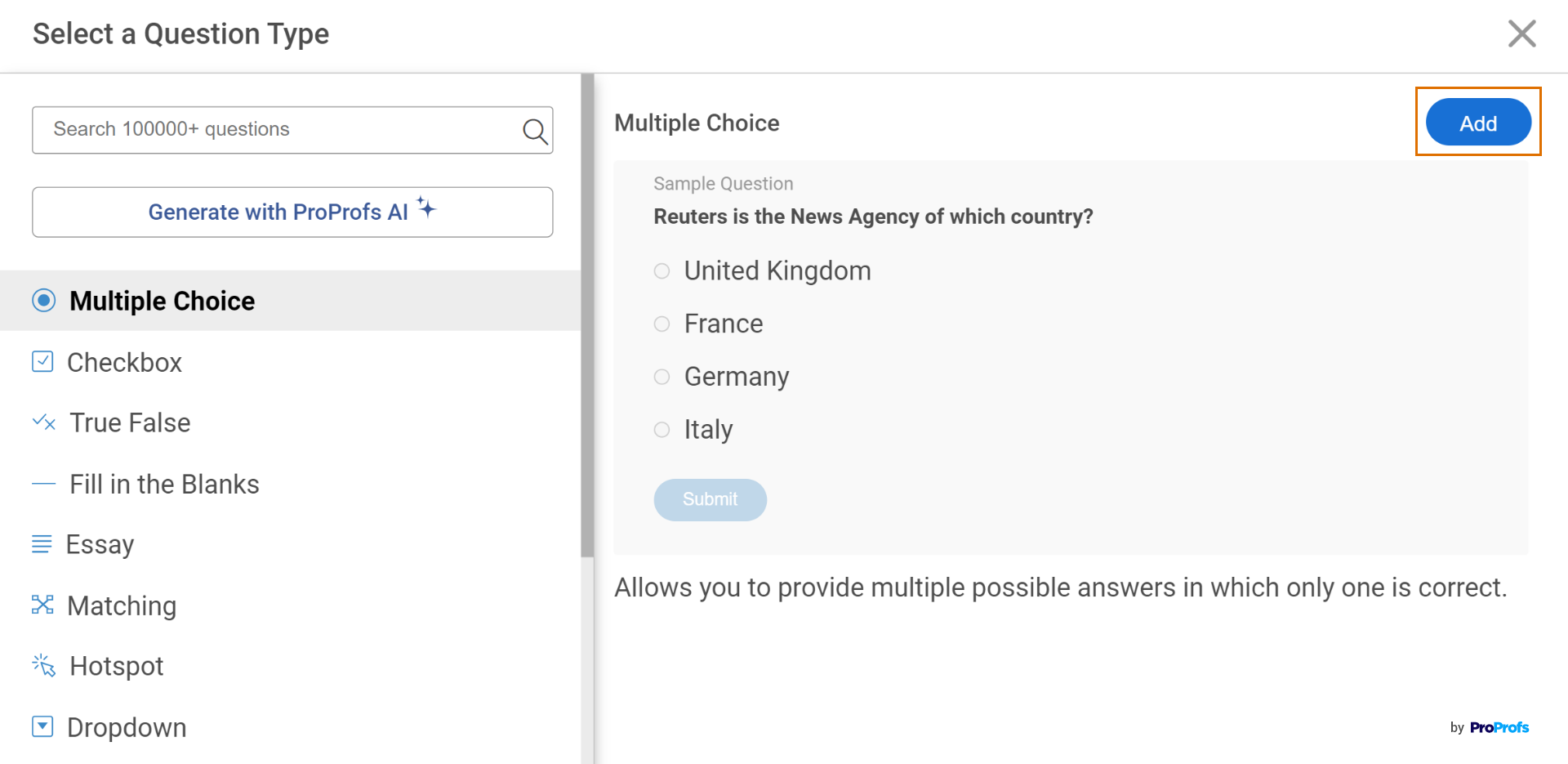

2. Build questions using 20+ question types. You can stick to a single type, such as multiple choice, or mix types to keep the quiz engaging.

3. Set up partial grading, question pooling, and anti-cheating settings

4. Add instant feedback per question

5. Once the assessment is ready, distribute it in any of these ways:

- Share a link through email or any other platform

- Embed the assessment on your website or blog

- Print the quiz

- Share the QR code for the quiz

Step 5: Use Exit Tickets for Daily Touchpoints

Full rubric assessments aren’t daily tools. But quick reflections are. Ask one question at the end of class:

- “What are you confident about today, and what are you still fuzzy on?”

- “What would you explain differently if you had to teach this to someone else?”

One question. Two minutes. Done consistently, it builds the self-evaluation habit you actually want.

What Types of Student Self-Assessment Should I Use?

Online Quizzes

Online quizzes are the highest-validity format for knowledge-based self-assessment because they produce objective data that’s harder to game. A student can’t overrate their recall if the quiz shows them what they actually remembered.

The reason I use an online quiz builder for knowledge-based self-assessment instead of a paper form is the same reason I moved away from vague rubrics. It removes the space where optimism quietly replaces accuracy.

Tools like ProProfs Quiz Maker let educators build scored quizzes with instant feedback, question pooling, and anti-cheating settings, then share via link or QR code.

The real advantage is aggregate data: score trends, attempt frequency, and skill mastery levels across an entire cohort, not a stack of self-reported feelings. That’s the kind of learning analytics that actually informs instruction.

Rubrics and Checklists

Rubrics work best when the criteria are observable and specific. Checklists work for process compliance: “Did I cite all sources? Did I address each part of the prompt? Did I revise based on feedback?”

Neither works when the criteria are vague enough that two students reading the same descriptor would interpret it differently.

Portfolios

Portfolios are longitudinal self-assessment. Students compile selected work over time and reflect on what it reveals about their growth. The metacognitive demand is higher, and the time investment is significant. They’re best used when you want students to construct a narrative about their own learning, not just report a snapshot.

Learning Logs

Learning logs are low-cost, high-frequency. A student who records three observations after every class ends the semester with a detailed account of their own learning process. The challenge is consistency: logs only work when they become habitual, and habits require structure from the instructor, not just good intentions from the student.

Peer Assessment

Peer assessment can complement self-assessment well, but only when used carefully. Done right, it grounds a student’s self-perception in external reality before they finalize their own rating. Done carelessly, it produces the pact dynamic: everyone rates everyone highly, no one is accountable, and the data is noise.

If you use it, anonymize responses where culturally appropriate, and give students specific criteria, not open-ended impressions.

Self-Reflection Activities

Various self-reflection activities, such as writing prompts, guided questions, or video diaries, can help students reflect on their learning experiences. These activities prompt students to identify their strengths, weaknesses, challenges, and strategies that have been effective in their learning process. Self-reflection activities foster metacognitive skills and promote self-awareness.

Teachers can also incorporate video response questions into self-reflection activities, allowing students to articulate their thoughts and feelings visually and verbally, further enhancing their introspection and self-expression skills.

Learning Inventories

Learning inventories assist students in evaluating their preferred learning styles, study habits, and strategies. By understanding their learning preferences, students can optimize their study routines, engage in activities that suit them best, and make the most of their learning experiences. This method enhances self-awareness and promotes effective learning.

Learning Reflection Conferences

Learning reflection conferences involve students meeting one-on-one with their teachers to discuss their progress, challenges, and future growth. These conferences give students an opportunity to reflect on their learning journey, seek feedback, and align their goals with their teachers’ guidance. They encourage students to take an active role in their education and strengthen the student-teacher relationship.

Why Does Student Self-Assessment So Often Go Wrong?

I’ve spent a lot of time in teacher forums and academic communities, reading educators describe the same frustration in different words. The honest takeaways are not flattering.

The Honesty Gap Is Real, and It’s Not What You Think

The easy explanation is that students lie because they want better grades. And sure, that happens.

But the fuller picture is more interesting and more fixable.

Some students genuinely don’t know how good or bad their work is. They don’t have an accurate internal benchmark, so they default to optimism. Others come from educational cultures where admitting struggle is associated with shame, and no rubric in the world will override that in a group setting.

And then there are students who understand the task perfectly and fill in “Excellent” anyway because they’ve learned, from years of experience, that nothing bad happens when they do.

All three of those are different problems. They require different solutions. Lumping them together as “students are dishonest” is where most self-assessment systems go wrong before they’ve even started.

The Dunning-Kruger Effect Shows Up in Classrooms

Here’s the part that stings: the weakest students tend to overestimate their performance the most, and the strongest students tend to underrate themselves.

This isn’t a character flaw. It’s a well-documented cognitive pattern. The skill to accurately self-assess a performance is closely related to the skill required to perform well in the first place. Students who lack the competency also lack the ability to recognize what competency looks like.

Which means your self-assessment data is systematically skewed in exactly the direction you don’t want it to be.

Students Can Smell Busywork, and They’re Usually Right

Graduate students on forums like r/oxforduni have said it plainly: many self-assessment exercises feel like institutional theater. A way for schools to appear student-centered without actually committing the resources to be so.

That cynicism is earned. Students have sat through years of reflection activities that disappeared into a filing cabinet the moment they handed them in. When the data visibly changes nothing, students stop treating the exercise as real.

The fix isn’t a lecture about the value of self-reflection. It’s a design change that makes the connection between assessment and outcome impossible to miss.

Understanding what breaks student self-assessment is useful. But it’s not enough on its own. The next section is where we get into what actually works, and why.

What Does Effective Student Self-Assessment Actually Look Like?

Three things separate a self-assessment that produces real insight from one that produces a participation trophy pile:

- Specific, observable criteria instead of abstract descriptors

- An evidence requirement instead of a feeling-based rating

- A clear, visible connection between results and what happens next

Let me walk through each.

The Evidence Rule: Require Proof, Not Ratings

This is the single highest-leverage change you can make to any self-assessment rubric. Replace rating scales with evidence prompts.

Don’t ask: “How would you rate your class participation? (1-5)”

Ask: “Write down two specific contributions you made to class discussion this week. If you can’t name two, what got in the way?”

The first question invites a feeling. The second requires a memory. Students who actually participated can answer it easily. Students who didn’t are quietly confronted with the reality of their own behavior, privately, without anyone needing to make a scene about it.

This is what researchers call the “Explain Your Thinking” approach, and it works because recall requires evidence. Ratings only require optimism.

Rubrics That Actually Describe Observable Behavior

The word “participation” is not a criterion. It’s a category. Effective self-assessment rubrics break vague categories into specific, observable behaviors where two different people reading the same descriptor would reach the same conclusion.

| Vague Criterion | Specific Criterion |
| --- | --- |
| “I participated actively” | “I contributed at least twice per session with a specific comment or question” |
| “I completed the assignment well” | “I addressed all three prompt components with supporting evidence from the reading” |
| “I understood the material” | “I can explain the main argument in my own words without checking my notes” |

The right column is harder to fake. That’s the point.

The Stars and Stairs Method

For daily, low-stakes reflection without rubric overhead, this format is simple, fast, and genuinely useful.

Students identify:

- One Star: a specific strength they demonstrated, with a concrete example

- One Stair: a single, concrete next step before the next session

The constraint of “one thing” forces prioritization. “I will try harder” is not a stair. “I will re-read chapter three and write down two questions I still have before Thursday” is. The difference is accountability, and it’s built into the format itself.

Good methods are necessary. But they’re not sufficient. What turns methods into a system is the implementation, and that’s where most teachers who care about this still stumble.

Can Self-Assessments Work for Employee Training and Upskilling?

The same principles that make classroom self-assessment effective also apply to corporate learning. In fact, the stakes are often higher. If an employee can’t accurately identify their own skill gaps, it doesn’t just slow down learning. It can lead to overconfidence in critical areas, posing real risks to the business.

In training and upskilling, self-assessment plays four important roles.

- First, training readiness assessment helps managers and L&D teams understand who needs basic training and who can move ahead. This avoids wasting time and keeps learning relevant.

- Second, competency-based self-assessment allows employees to rate themselves against a defined framework. This makes it easier for organizations to see where to focus their development efforts.

- Third, repeated assessments help track learner progress over time. This makes skill growth visible, which keeps employees motivated and gives managers a clearer view of performance.

- Fourth, self-assessment supports personalized learning paths. When combined with quiz data, it helps route employees through content based on their actual performance, not assumptions.
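Routing on quiz performance can be as plain as a pair of thresholds. The sketch below is an assumption-heavy illustration: the cutoffs of 50 and 80, the track names, and the employee scores are all hypothetical and would need to come from your own competency framework.

```python
def route(score, basic_below=50, advanced_from=80):
    """Pick a learning track from a quiz score on a 0-100 scale.

    The default thresholds are placeholders, not recommended values.
    """
    if score < basic_below:
        return "foundations"
    if score >= advanced_from:
        return "advanced"
    return "core"


# Hypothetical pre-training quiz scores.
quiz_scores = {"Jordan": 42, "Riley": 67, "Sam": 91}

paths = {employee: route(score) for employee, score in quiz_scores.items()}

print(paths)
```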

The foundation remains simple. Clear criteria, evidence-based evaluation, and a direct link between results and next steps. That’s what makes self-assessment work, whether in classrooms or in the workplace.

The Swagger That Comes From Actually Knowing

I started this guide with a stack of self-assessment forms, all rated “Excellent,” and a teacher quietly questioning everything.

Here’s what I’ve learned: student self-assessment works best when I build a system that is precise, transparent, and clearly tied to real outcomes so students actually care.

A student who walks into a room knowing exactly what they’ve mastered and exactly where they still have gaps doesn’t need to inflate their self-rating. They’re not guessing. They have evidence. That’s the earned confidence, the “swagger,” that comes from real self-knowledge rather than optimistic self-perception.

The work is in the design: specific criteria, evidence requirements, calibration training, and a visible next step after every assessment.

Start with one rubric. Make the criteria observable. Add an evidence prompt. Run it twice with low stakes. See what the data actually tells you.

That’s the whole first step. And it’s enough.

Frequently Asked Questions

What should I do after learners complete a self-assessment?

Use the results to change something visible. Group students by identified gaps, adjust the following week's content, or schedule a brief conversation with students whose self-ratings diverge significantly from observed performance. If nothing changes after the assessment, students learn quickly that it doesn't matter.

How do I ensure learners take self-assessments seriously?

Explain what you will do with the data before they fill it out, build in a calibration consequence for significant inaccuracy (not a punishment, but a conversation), and make the format specific enough that accurate responses require real thought. Vague rubrics invite vague responses; that's a design problem, not a motivation problem.

Can self-assessments be used for employee training and upskilling?

Yes, and the framework transfers directly. Pre-training readiness assessments, competency-based self-evaluation, and progress tracking through repeated assessments all work in corporate L&D. The key difference is that professional contexts usually require formalized scoring thresholds and completion tracking for compliance purposes.

What metrics should I track in self-assessment results?

Track score distribution across criteria (to identify which skills your cohort consistently overestimates), score change over repeated assessments (for growth measurement), attempt frequency (for habit formation), and the gap between self-ratings and instructor or quiz-based ratings (for calibration accuracy).
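If your results live in a spreadsheet export, most of these metrics take only a few lines to compute. Here is a rough sketch using only the Python standard library; the record layout, names, and numbers are hypothetical, and the three helper functions are my own illustration, not features of any quiz platform.

```python
from statistics import mean

# Hypothetical records: (student, criterion, self-rating, instructor rating).
ratings = [
    ("Avery", "evidence", 4, 2),
    ("Blake", "evidence", 3, 2),
    ("Avery", "structure", 3, 3),
    ("Blake", "structure", 2, 3),
]

# Hypothetical quiz scores per student, oldest attempt first.
attempts = {"Avery": [55, 70, 80], "Blake": [60, 58]}


def mean_gap_by_criterion(rows):
    """Average (self - instructor) gap per criterion; positive means overestimation."""
    gaps = {}
    for _, criterion, self_rating, instructor_rating in rows:
        gaps.setdefault(criterion, []).append(self_rating - instructor_rating)
    return {criterion: mean(values) for criterion, values in gaps.items()}


def score_change(history):
    """Latest score minus first score for each student."""
    return {student: scores[-1] - scores[0] for student, scores in history.items()}


def attempt_frequency(history):
    """Number of recorded attempts for each student."""
    return {student: len(scores) for student, scores in history.items()}


gap = mean_gap_by_criterion(ratings)
change = score_change(attempts)
frequency = attempt_frequency(attempts)

print(gap, change, frequency)
```

In this made-up data, the cohort overrates itself on “evidence” and slightly underrates itself on “structure,” which is the kind of pattern that tells you where calibration practice is needed.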

How do I create self-assessments quickly and effectively?

Start with a clear skill you want to measure, then write three to five observable behavioral indicators for it. Build the rubric around those indicators, not around abstract qualities. For digital delivery, quiz-builder platforms with AI generation and ready-to-use templates reduce creation time significantly while keeping scoring consistent.

How do I address the Dunning-Kruger effect in student self-assessments?

Run calibration exercises before high-stakes assessments: have students rate sample work against the rubric, compare their ratings to yours, and discuss the gap openly. That external reference point significantly reduces systematic overestimation by lower-performing students, because it gives them something concrete to measure against.

What is the difference between self-assessment and formal assessment?

Formal assessment measures performance against an external standard. Self-assessment builds the capacity to recognize that standard internally. The two are most powerful together: formal assessment provides the calibration point, and self-assessment develops the metacognitive skill to use that calibration without constant external input.
