Most educators still equate assessment with tests. A score goes into the gradebook, the class moves on, and the data is rarely used. I do not see that as assessment. That is recordkeeping.

When I think about assessment, I think about a process. You are gathering evidence of what students understand, what they can actually do, and what they need next, and then using that evidence to adjust your instruction. The test is only one tool within that process.

When you use assessment well, it becomes one of the most powerful ways to improve learning outcomes. When you do not, it quickly turns into extra work for you and unnecessary pressure for your students.

In this guide, I will walk you through what assessment really is, why the research behind it matters, the different types you can use and when to use them, and the design principles that separate assessments that drive learning from those that simply measure it.

What Is Educational Assessment?

Educational assessment is the systematic process of collecting evidence of student learning to improve instruction and outcomes. It is not a single event; it is a continuous feedback loop that runs before, during, and after instruction.

According to the Institute of Education Sciences (IES, 2025), well-designed assessment answers three questions: What do students currently know? What can they demonstrate? And what do they need next?

That third question is the one most assessments skip entirely, which is why so many feel disconnected from actual learning.

Assessment for education is not about catching students out. It is about generating the evidence needed to move them forward.

Why Educational Assessment Is Necessary for Improving Student Learning Outcomes

Without assessment, instruction is undirected. There is no way to know whether students have already mastered what you are about to teach, or whether a gap from three units ago is quietly compounding. Assessment is what converts teaching intent into teaching evidence.

The evidence on this is consistent. John Hattie’s synthesis of more than 800 meta-analyses ranks feedback, which good assessment generates, among the highest-impact influences on student achievement. A randomized controlled trial of the ASSISTments platform found that students at or below the median who received immediate, targeted feedback on assignments gained the equivalent of nearly a full additional year of learning in math, measured at 0.18 standard deviations.

Assessment benefits all students. But it is most transformative for students who are struggling. Getting it right is an equity issue, not just a pedagogical preference.

The core reasons assessment drives improvement:

- It surfaces learning gaps early, when intervention is still low-cost

- It identifies each student’s Zone of Proximal Development, the point where instruction is most effective

- It gives teachers real data to differentiate instruction rather than teach to an imaginary average

- It creates shared accountability between teachers and learners

- It provides the longitudinal data institutions need to improve programs over time

What Are the Different Types of Educational Assessment?

The four core types of educational assessment are diagnostic (before instruction), formative (during instruction), summative (after instruction), and standardized (benchmarked against a norm or peer group). Most effective programs also use performance-based, adaptive, and ipsative assessments to capture the full range of what students actually know and can do.

Here is how each one works, and more importantly, when to use it.

1. Diagnostic Assessment: Start With What Students Already Know

Diagnostic assessment happens before instruction begins. Its job is to establish a baseline: what students already know, what gaps exist, and what misconceptions need addressing before they become bigger problems.

The most important shift in modern diagnostic practice is moving from deficit-focused to asset-based. Rather than cataloguing what students cannot do, effective diagnostics start by identifying what they can do, then mapping what comes next.

Why it matters: Without a baseline, you risk spending three days re-teaching material that half the class already mastered while the other half has a gap you never knew existed. Diagnostics make “just-in-time” instruction possible.

Examples:

- A pre-unit math quiz checking whether students have the prerequisite operations for what comes next

- A writing diagnostic identifying who needs foundational support before an argumentative writing unit begins

- A safety knowledge checklist administered before compliance training starts

How to make it work:

- Keep it to 10 to 15 targeted questions, enough to reveal meaningful gaps, not so long it feels like a test

- Align every item to skills that directly affect the upcoming unit, not a broad review of everything

- Share results with students so they understand the purpose and feel ownership over their starting point

- Use the data to group learners and differentiate your approach before you begin

- Re-administer after instruction to quantify actual growth against the baseline
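
To make that last step concrete, here is a minimal Python sketch of one way to quantify growth against a baseline. It uses normalized gain (the share of available headroom a learner actually closed), which is one common convention and my choice for illustration, not the only option. The learner names, scores, and 100-point scale are hypothetical.

```python
from typing import Optional


def normalized_gain(pre: float, post: float, max_score: float = 100.0) -> Optional[float]:
    """Fraction of the available headroom (max_score - pre) that was gained."""
    headroom = max_score - pre
    if headroom <= 0:
        # Learner was already at the ceiling, so gain is undefined.
        return None
    return (post - pre) / headroom


# Hypothetical (pre, post) scores on the same diagnostic.
scores = {"Ana": (40, 70), "Ben": (80, 90), "Cy": (100, 100)}

for name, (pre, post) in scores.items():
    print(name, "raw gain:", post - pre, "normalized gain:", normalized_gain(pre, post))
```

In this made-up data, Ana and Ben gain different raw points but close the same half of their headroom, which is the kind of pattern a raw score difference hides.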

2. Formative Assessment: The Real-Time Compass

Formative assessment is a low-stakes, ongoing evaluation during instruction. It gives teachers and students real-time feedback while there is still time to act on it, not after the unit ends, but while learning is actively happening.

IES (2025) identifies formative assessment as one of the strongest evidence-based interventions for both elementary and secondary achievement. The reason it works is straightforward: it engages students in thinking about their own thinking, while simultaneously giving teachers the data to adjust before gaps calcify into failure.

Formative assessment is also the primary tool for identifying a student’s Zone of Proximal Development, the instructional sweet spot just beyond what a student can do independently but reachable with targeted support.

Examples:

- Exit tickets: one thing learned today, one question still remaining

- Weekly five-question quizzes with automated instant feedback; tools like ProProfs Quiz Maker allow you to configure per-answer feedback so students understand not just their score but where their reasoning went wrong

- The 3-2-1 format: three facts, two connections, one question

- Minute Papers and “Muddiest Point” exercises between lesson segments

- Live polls or scenario questions mid-lesson

How to make it work:

- Make it clear these are not graded; psychological safety is what makes students respond honestly

- Act on results the same day, not next week

- Give feedback that specifies what worked, what did not, and what the specific next step is

- Rotate formats to reach different learning preferences and keep engagement from dropping

A note on feedback literacy: The research surfaces something most teachers feel but rarely say out loud: not all students know how to use feedback, even when it is good feedback. Some students do not read it. Some do not understand it. Some have been conditioned by years of grades without context to treat feedback as noise. This is not a reason to stop giving feedback. It is a reason to teach students explicitly how to use it: what to look for, what to do differently, and how to connect written feedback to their next attempt.

3. Summative Assessment: The Final Validation

Summative assessment evaluates learning at the end of a unit, term, or course. It provides a definitive measure of whether students met the learning objectives defined at the outset. It is typically graded.

Beyond recording individual achievement, summative data increasingly drives institutional decisions. Universities identify structural bottlenecks (modules where cohort performance persistently drops) and restructure course delivery based on aggregated summative outcomes. A newer pattern worth noting is the “summative-formative” hybrid, where mock final exams are used as a formative tool weeks before the actual terminal assessment, giving students a chance to identify and close gaps before it counts.

Examples:

- End-of-unit tests or statewide exams

- Capstone projects, comprehensive exams, and final research papers

- Certification tests or practical skills demonstrations in training programs

How to make it work:

- Align every question to an objective that was explicitly taught; surprises on a summative assessment are not rigor, they are unfairness

- Mix recall and application items; a test that only checks memorization misses reasoning skills entirely

- Compare final scores against diagnostic baselines to measure real growth, not just absolute performance

- Use cohort-level data to inform curriculum redesign for the next cycle

Diagnostic vs. Formative vs. Summative: Quick Comparison

| Feature | Diagnostic | Formative | Summative |
|---|---|---|---|
| Purpose | Establish baseline | Guide learning in real time | Certify end-of-unit mastery |
| Timing | Before instruction | Ongoing throughout | End of unit or course |
| Stakes | None | Low | High |
| Feedback | Informs planning | Immediate and actionable | Delayed and evaluative |
| Learner role | Shows starting point | Actively reflects and adjusts | Completes and moves on |
| Examples | Pre-tests, surveys | Exit tickets, polls, quizzes | Final exams, projects, certifications |

4. Standardized Assessment: The Common Benchmark

A standardized assessment is designed, administered, and scored identically for every test-taker. That consistency is what makes meaningful comparison possible across classrooms, schools, regions, and countries.

Standardized data like NAEP provides systemic visibility that no individual classroom assessment can offer. When only 28% of U.S. eighth graders reach math proficiency, as NAEP data currently shows, standardized assessment is the mechanism that makes that pattern visible and actionable at the policy level.

Examples:

- Statewide reading and math exams in K-12

- SAT, ACT, GRE for higher education entrance

- Industry-standard compliance or safety certification tests

How to make it work:

- Treat standardized scores as one data point among many, not the complete picture of a student

- Prepare students for both the content and the format; test anxiety and unfamiliarity with format are construct-irrelevant variables that suppress scores

- Analyze group-level trends, not just individual scores, to identify systemic strengths and gaps

5. Performance-Based Assessment: Demonstrating Real-World Readiness

Performance-based assessment requires learners to apply knowledge to complete a real task or solve a real problem. It measures what learners can actually do, not just what they can recall on a multiple-choice test.

Some of the most important competencies (communication, collaboration, critical thinking, and creative problem-solving) are nearly invisible in traditional test formats. Performance tasks make them legible. They also tend to be more engaging because the connection between effort and real-world relevance is explicit and immediate.

Examples:

- Designing and presenting a science experiment in K-12

- Engineering students building and defending a prototype

- Sales teams delivering a mock client pitch in professional training

How to make it work:

- Build rubrics defining success criteria for both process and product before the task begins, not after

- Use realistic scenarios that mirror challenges learners will actually face outside the classroom

- Build in self-review and peer feedback cycles to deepen reflection

- Document or record performances where possible so they can be reviewed thoroughly and fairly


6. Adaptive Assessment: Personalized Precision

An adaptive assessment uses algorithms or AI to adjust question difficulty in real time based on how a learner responds. Answer correctly, and the next item is harder. Struggle, and the system serves something more accessible. The goal is to pinpoint a learner’s true skill level without requiring a long, uniform test that bores high performers and demoralizes low ones.
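
The adjustment logic described above can be sketched in a few lines of Python. This is a deliberately simplified "staircase" rule that I am assuming for illustration: one level up after a correct answer, one level down after an incorrect one, on a hypothetical 1 to 5 difficulty scale. Production computer-adaptive tests typically rely on more sophisticated statistical models, so treat this as a picture of the idea rather than how any particular platform works.

```python
def next_difficulty(current: int, was_correct: bool, lowest: int = 1, highest: int = 5) -> int:
    """Move one level up after a correct answer, one level down otherwise."""
    step = 1 if was_correct else -1
    # Clamp so the level never leaves the available difficulty range.
    return max(lowest, min(highest, current + step))


def run_staircase(responses, start: int = 3):
    """Return the difficulty level served before each item, plus the final level."""
    levels = [start]
    for was_correct in responses:
        levels.append(next_difficulty(levels[-1], was_correct))
    return levels


# Hypothetical response pattern: True means the learner answered correctly.
print(run_staircase([True, True, False, True, False, False]))
```

The sequence of levels oscillates around the point where the learner starts getting items wrong, which is roughly where their current skill level sits.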

Adaptive testing operationalizes the Zone of Proximal Development at scale. Research in 2025-26 shows AI-enhanced active learning environments can produce 54% higher test scores compared to passive delivery when implemented well.

A note on skepticism: Some students experience adaptive tests as unfair. Not everyone gets the same questions, which creates a perception problem even when the methodology is sound. Before deploying adaptive assessment in any high-stakes context, explain how it works. Students who understand the logic tend to accept it. Students who encounter it without explanation often feel cheated.

Examples:

- Computer-adaptive reading programs that adjust difficulty per question

- Adaptive math assessments that home in on specific error patterns

- Personalized compliance modules that skip content a learner already demonstrably knows

How to make it work:

- Explain how adaptive testing works before it starts

- Pair with fixed-question components in high-stakes contexts to address equity concerns

- Audit system accuracy periodically to confirm difficulty adjustments are calibrated correctly

- Test all platforms with screen readers and assistive technology before deployment

7. Authentic and Alternative Assessment: Beyond the Test

Authentic assessments ask students to demonstrate knowledge through real-world tasks. Alternative assessments use any format outside traditional testing, such as portfolios, exhibitions, debates, or live demonstrations.

These formats surface the competencies that matter most for life after school, and they tend to connect classroom learning to the world students will actually inhabit.

Examples:

- Student portfolios documenting growth over a semester

- Case study analysis or field research projects in higher education

- Client simulations or project pitches in professional training

How to make it work:

- Align every task to a specific learning objective, not just general capability

- Create detailed rubrics before the task launches so scoring is consistent across evaluators

- Offer choice in how students demonstrate mastery; multiple valid formats increase equity and engagement

- Ask students to explain their process, not just show their product; the explanation reveals depth of understanding

8. Criterion-Referenced, Norm-Referenced, and Ipsative Assessment

These frameworks determine how results are interpreted, and they change what a score actually means.

Criterion-referenced: Performance is measured against a fixed standard (for example, “must score 75% to pass”). Best for certification, compliance, and mastery verification. Examples include driving tests, chapter exams, and industry certifications.

Norm-referenced: Performance is compared to a peer group (for example, national percentile). Best for selection, placement, and identifying relative performance. Examples include the SAT, GRE, and IQ assessments.

Ipsative: Performance is compared to the learner’s own previous results. Best for motivation, goal-setting, and growth tracking. It is particularly valuable for students who may not rank highly against peers but are making significant personal progress.

| | Criterion-Referenced | Norm-Referenced | Ipsative |
|---|---|---|---|
| Compares to | Fixed standard | Peer group | Self (prior performance) |
| Best for | Mastery, certification | Ranking, selection | Growth tracking, motivation |
| Score means | Met or did not meet standard | Percentile vs. group | Improved, stayed same, or declined |
| Example | 80% required to pass unit exam | SAT national percentile | Draft 1 vs. Draft 3 of an essay |
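
To see how the same score can carry three different meanings, here is a small Python sketch. The cut score, peer scores, and previous result are all hypothetical, and the percentile rule (share of peers scoring strictly below) is one simple convention among several.

```python
def criterion_referenced(score: float, cut_score: float = 75.0) -> str:
    """Compare the score to a fixed standard."""
    return "met standard" if score >= cut_score else "did not meet standard"


def norm_referenced(score: float, peer_scores) -> float:
    """Percentile rank: percent of peers who scored strictly below this score."""
    below = sum(1 for s in peer_scores if s < score)
    return 100.0 * below / len(peer_scores)


def ipsative(score: float, previous_score: float) -> str:
    """Compare the score to the learner's own earlier result."""
    if score > previous_score:
        return "improved"
    if score < previous_score:
        return "declined"
    return "stayed the same"


# One hypothetical learner, one score, three interpretations.
score = 72
peers = [55, 60, 65, 70, 72, 80, 85, 90, 95, 98]
print(criterion_referenced(score))
print(norm_referenced(score, peers))
print(ipsative(score, previous_score=60))
```

With these made-up numbers, the learner misses the fixed standard, sits below the middle of the peer group, and has still improved on their own earlier result. Which of those messages the learner hears depends entirely on the framework you choose.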

Assessment Strategy Map

Use this matrix to match your instructional goal to the right assessment type.

| Goal | Best Assessment Type | Example Formats | Data You Get | What to Do Next |
|---|---|---|---|---|
| Identify prior knowledge | Diagnostic | Pre-test, entry survey, skill checklist | Baseline gaps and strengths | Group learners, adjust lesson scope |
| Monitor progress mid-unit | Formative | Exit tickets, quizzes, polls, peer review | Real-time gap identification | Reteach, offer targeted support |
| Certify end-of-course mastery | Summative | Final exam, capstone project, presentation | Achievement vs. objectives | Advance, remediate, or certify |
| Compare across schools or cohorts | Standardized | State exam, industry certification | Benchmarked performance data | Identify systemic gaps, inform policy |
| Evaluate real-world readiness | Performance-based | Simulation, pitch, portfolio | Applied skill demonstration | Recommend for next level or role |
| Personalize difficulty in real time | Adaptive | Computer-adaptive test | Precise skill level estimate | Route learner to appropriate content |
| Motivate through personal growth | Ipsative | Pre/post quiz comparison, draft portfolio | Individual progress over time | Set next personal learning target |

Once you know which type fits your goal, the next question is how to make it rigorous. Here is what separates a good assessment from one that produces misleading data.

What Makes an Educational Assessment “Good”?

A good assessment satisfies three standards: validity, reliability, and fairness. Without all three, the data you collect cannot support sound instructional decisions. Here is what each one means and how to protect it.

Validity: Does It Measure What You Intend?

Validity is not a property of the test itself. It is a property of the interpretations made from the scores. An assessment is valid when scores actually reflect the skill or knowledge they claim to measure, and nothing else.

Constructive Alignment, the framework proposed by John Biggs, provides the practical test: objectives, instruction, and assessment must all point at the same learning outcome. If a science test accidentally measures reading fluency rather than understanding of photosynthesis, the science score is invalid, even though it looks like a number.

Threats to validity: The main threat is construct-irrelevant variance, which means measuring something other than the intended skill. Examples include testing language proficiency during a math assessment or rewarding answer length in an automated essay scoring system.

How to protect it:

- Map every item to a specific, measurable learning objective before writing the test

- Choose formats that match the skill: simulations for applied technical ability, essays for reasoning, and performance tasks for demonstration

- Pilot with a small group first to confirm questions work as intended

- Have a second reviewer check for alignment and clarity

Reliability: Does It Produce Consistent Results?

Reliability is the consistency of measurement across time, conditions, and graders. An assessment is reliable if a student who takes it twice under similar conditions gets similar scores, and if two different graders marking the same response reach similar conclusions.

Threats to reliability: Subjective grading bias, inconsistent test conditions, ambiguous questions, and variable rubric application.

How to protect it:

- Use standardized rubrics and apply them consistently across all test-takers

- Leverage automated grading for objective items to eliminate inter-rater inconsistency

- Remove or rewrite ambiguous questions that can be interpreted multiple ways

- Train all human graders together before marking begins
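
If you want a number for how consistently two graders apply a rubric, one widely used statistic is Cohen's kappa, which corrects raw agreement for the agreement you would expect by chance. The sketch below is a minimal hand-rolled version; the two graders and their pass/fail marks are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b) -> float:
    """Chance-corrected agreement between two graders marking the same responses."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both graders must mark the same non-empty set of responses.")
    n = len(rater_a)
    # Observed agreement: share of responses given the same mark by both graders.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if each grader assigned marks independently at their own rates.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[mark] * counts_b[mark] for mark in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # Both graders used a single identical mark throughout.
    return (observed - expected) / (1 - expected)


# Hypothetical marks from two graders on the same eight responses.
grader_1 = ["pass", "pass", "fail", "pass", "fail", "fail", "pass", "pass"]
grader_2 = ["pass", "fail", "fail", "pass", "fail", "pass", "pass", "pass"]
print(round(cohens_kappa(grader_1, grader_2), 2))
```

Here the graders agree on six of eight responses, but kappa comes out well below 0.75 because some of that agreement would happen by chance. A low value before marking begins is a signal to run another calibration session.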

Fairness: Does Everyone Get an Equitable Opportunity?

Fairness means removing construct-irrelevant barriers that prevent diverse learners from demonstrating their actual knowledge. It is distinct from making the test easy. It means ensuring that performance reflects learning, not circumstance.

Modern practice uses Universal Design for Assessment (UDA) to build fairness in from the start, and Differential Item Functioning (DIF) analysis to detect after the fact whether specific items disadvantage particular subgroups.
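
For readers curious what a DIF check looks like in practice, here is a minimal Python sketch of one classic approach, the Mantel-Haenszel common odds ratio. Learners are first matched into bands by total score, and then the odds of answering one item correctly are compared between a reference group and a focal group within each band. The counts below are invented, and a real analysis would add significance testing and effect-size rules that this sketch leaves out.

```python
from typing import Optional


def mantel_haenszel_odds_ratio(strata) -> Optional[float]:
    """Common odds ratio across score bands for a single item.

    Each stratum is a tuple of counts:
    (reference_correct, reference_wrong, focal_correct, focal_wrong).
    A value near 1.0 suggests the item behaves similarly for both groups.
    """
    numerator = 0.0
    denominator = 0.0
    for ref_correct, ref_wrong, focal_correct, focal_wrong in strata:
        total = ref_correct + ref_wrong + focal_correct + focal_wrong
        if total == 0:
            continue  # Skip empty score bands.
        numerator += ref_correct * focal_wrong / total
        denominator += ref_wrong * focal_correct / total
    if denominator == 0:
        return None  # Ratio is undefined for this data.
    return numerator / denominator


# Hypothetical counts for one item, split into low and high total-score bands.
item_counts = [
    (4, 6, 2, 8),  # low band
    (8, 2, 6, 4),  # high band
]
print(mantel_haenszel_odds_ratio(item_counts))
```

In this invented example the ratio is well above 1, meaning that reference-group learners have higher odds of getting the item right than focal-group learners with similar overall scores. That is the pattern that should send the item back for review.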

Threats to fairness: Cultural bias in examples, linguistic complexity unrelated to the content being assessed, inaccessible formatting, and time pressure that measures anxiety rather than knowledge.

How to protect it:

- Apply the MAP 2.0 Framework (covered in the Fairness Playbook below)

- Provide accommodations proactively, not reactively

- Use AI to audit draft questions for cultural and linguistic assumptions

- Test all platforms with screen readers and assistive technologies before deployment


Assessment Design Checklist

Before publishing any assessment, work through these three clusters.

Alignment

- Every question maps to a specific learning objective from the unit

- All major objectives are represented and weighted appropriately

- No questions included that are not tied to the stated course goals

Validity

- Format matches the skill being assessed (simulation for applied skills, not multiple-choice)

- Piloted with a small group to confirm that questions function as intended

- Reviewed by a second evaluator for alignment, clarity, and unintended complexity

Reliability

- Clear, standardized instructions provided to all test-takers

- Scoring rubric or key created before grading begins

- All ambiguous or double-barreled questions removed or rewritten

- Human graders calibrated on the rubric application before marking

Fairness and Accessibility Playbook

Assessments that are not fair do not produce valid data. They measure access, circumstance, and background, not learning. Here is how to design for equity from the start.

The MAP 2.0 Framework

Map the Goal: Clarify exactly what is being assessed before writing a single question. Removing ambiguity at this stage prevents inadvertently adding complexity that disadvantages some learners.

Assure Accessibility: Audit every question for hidden language barriers. Replace technical terminology with familiar equivalents where the terminology itself is not being tested. Check that visual elements are screen-reader compatible and that formatting works across device types.

Probe for Fairness: Ask whether the question requires knowledge or context that is not part of the learning objective. A word problem set in a ski resort disadvantages students in regions without skiing culture, even if the math content is identical. AI tools can serve as a useful reflective audit here, flagging cultural or regional assumptions in draft items.

Accommodation Framework

| Barrier Type | Problematic Factor | Inclusive Solution |
|---|---|---|
| Linguistic | Complex vocabulary unrelated to content | Use plain, direct language; define technical terms explicitly |
| Cultural | Contextual examples unfamiliar to some groups | Use universal or locally relevant examples |
| Cognitive | Multiple simultaneous demands | Break multi-part questions into focused sub-steps |
| Sensory | Environmental distractions | Offer location-agnostic or digital options with adjustable settings |
| Temporal | Strict time pressure unrelated to skill | Remove time limits or extend time where speed is not the objective |

Additional Accessibility Standards

- Test all platforms for screen reader compatibility before deployment

- Provide offline or low-tech alternatives where connectivity is unreliable

- Never penalize learners for using approved accommodations

- Where standard format cannot accommodate a learner’s needs, offer equivalent alternate evidence tasks that assess the same objective through a different medium

- Document all accommodation decisions consistently so grading fairness is auditable across cohorts

Key Challenges in Educational Assessment, and How to Address Them

Over-Testing

Excessive testing narrows curriculum, raises student anxiety, and crowds out deeper learning. High-stakes exams consume instructional time at the expense of creativity, collaboration, and critical thinking.

Fix it: Replace some high-stakes tests with performance tasks or project-based assessments. Shift toward assessment for learning (providing feedback during the process) rather than only assessment of learning at the end.

Curriculum Misalignment

Students consistently report frustration when assessments feel disconnected from what was taught. This erodes both credibility and motivation simultaneously. It is one of the most direct causes of the “trick question” complaint teachers hear after every test.

Fix it: Build a blueprint that links every item to a specific objective before writing questions. Gather learner feedback after every major assessment to identify misalignment before it repeats.
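
A blueprint does not need special software. As a rough sketch, assuming you keep objectives and item mappings in a simple structure like the hypothetical one below, a few lines of Python can flag both questions that map to no objective and objectives that no question covers.

```python
def audit_blueprint(objectives, blueprint):
    """Return (unaligned_items, uncovered_objectives) for an assessment blueprint.

    objectives: iterable of objective codes taught in the unit.
    blueprint: dict mapping each item ID to the objective code it assesses (or None).
    """
    known = set(objectives)
    # Items whose objective is missing or not part of this unit.
    unaligned = sorted(item for item, obj in blueprint.items() if obj not in known)
    # Objectives that no item on the assessment touches.
    covered = {obj for obj in blueprint.values() if obj in known}
    uncovered = sorted(known - covered)
    return unaligned, uncovered


# Hypothetical unit with three objectives and a four-question draft test.
unit_objectives = ["OBJ1", "OBJ2", "OBJ3"]
draft_test = {"Q1": "OBJ1", "Q2": "OBJ1", "Q3": "OBJ2", "Q4": None}

unaligned_items, uncovered_objectives = audit_blueprint(unit_objectives, draft_test)
print("Unaligned items:", unaligned_items)
print("Uncovered objectives:", uncovered_objectives)
```

In this draft, Q4 is tied to nothing that was taught and OBJ3 is never assessed, which are the two misalignments students are most likely to notice.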

Time Constraints

Time is the most commonly cited barrier to varied, meaningful assessment. Large-scale tests consume limited instructional time; formative assessment often gets cut first.

Fix it: Use short, targeted assessments of five to ten questions to gather comparable insight in a fraction of the time. Leverage automated grading to eliminate manual scoring overhead. Integrate assessment into learning activities so it does not feel like a separate event.

Accessibility Gaps

Not all learners have equal access to the devices or connectivity required for modern digital assessment. Educators also flag usability concerns with some online platforms.

Fix it: Choose platforms that work across device types and internet speeds. Provide offline alternatives where needed. Test all tools with assistive technologies before deployment.

Poor Feedback Quality

Grades without context do not produce learning. Students need to understand what they did well, where the gap is, and specifically what to do differently before the next assessment.

And this is where a harder truth surfaces: feedback quality is only half the problem. The other half is feedback literacy. Research consistently documents that students vary widely in their ability and willingness to engage with feedback. Some do not read it. Some read it and do not know what to do with it. Some have been conditioned by years of grades without explanation to treat written feedback as noise they can safely ignore.

The fix is not less feedback. It is teaching students to use feedback as a tool. That means building in structured time to review and respond to feedback, using rubrics students can reference independently, and making revision a visible and expected part of the learning process rather than an optional add-on.

Adaptive Testing Skepticism

Some students experience adaptive tests as unfair because not everyone receives the same questions. The hidden difficulty adjustment creates confusion and perceived inequity.

Fix it: Explain how adaptive testing works before the assessment begins. Pair adaptive tools with a fixed-question component for high-stakes evaluation. Use adaptive methods primarily for formative, low-stakes assessment until learner trust is established.

Automated Essay Scoring Concerns

Research documents that automated essay scoring (AES) systems can over-reward surface features such as length, grammar, and sentence complexity while being insensitive to argumentation quality and creativity. In some documented cases, they have scored structurally fluent but semantically nonsensical text highly.

Fix it: Use AES for initial scoring and practice feedback only. Require mandatory human review for any high-stakes writing assessment. Calibrate AES tools to your specific rubric criteria before deployment. Train students to interpret automated feedback critically rather than treat it as a final judgment.

The Role of AI and Technology in Modern Assessment

The question is no longer whether to use AI in assessment. It is how to use it well.

A critical distinction has emerged between generic AI tools (general-purpose systems that can complete tasks but do not build learning) and education-specific AI platforms designed with an intentional pedagogical purpose. ProProfs is one example of the latter: its AI capabilities are built specifically around the assessment workflow, from question generation to cohort-level reporting, rather than adapted from a general-purpose tool.

Research from the OECD Digital Education Outlook (2026) found that generic AI tools often show an “AI advantage” that disappears or reverses in exam conditions when the tool is removed, because students were completing work rather than learning. Education-specific AI, by contrast, shows sustained achievement gains by acting as a tutor and scaffolding assistant rather than a shortcut.

| AI Capability | Institutional Impact | Teacher or Student Benefit |
|---|---|---|
| Automated Question Level Analysis | Generates cohort heatmaps from uploaded assessments | Instantly identifies which topics need reteaching |
| Adaptive Difficulty Adjustment | Pinpoints true skill levels across large cohorts | Keeps every learner in their optimal ZPD |
| AI Question Generation | Rapidly builds question banks from uploaded content | Saves 5 to 10 hours of preparation time weekly |
| Engagement and Sentiment Analysis | Tracks login patterns to flag at-risk learners early | Acts as an early-warning system for disengagement |
| Multi-Language Question Generation | Enables assessment in regional or home languages | Improves equity for English learners |
| Automated Grading with Rubric Alignment | Reduces review cycles, speeds feedback loop | Faster, more consistent feedback on objective items |

What Technology Cannot Replace

AI and automation are force multipliers for assessment quality, but they require human oversight. Automated scoring should be calibrated to institutional rubrics. Adaptive algorithms should be audited periodically. AI-generated questions should be reviewed by educators before deployment. The expert teacher remains the essential interpreter of assessment data. The technology accelerates their workflow. It does not substitute for their judgment.


Assessment Terms Glossary

Alignment (Constructive Alignment): The degree to which assessment tasks directly reflect the specific learning outcomes that were taught. Proposed by John Biggs. Objectives, instructional methods, and assessments must all point at the same target. A misaligned assessment measures the wrong things and produces misleading data.

Criterion-referenced assessment: Scored against a fixed standard (e.g., “must score 75% to pass”), not relative to other learners. Best for certification and mastery verification.

Diagnostic assessment: Administered before instruction to establish a baseline of student knowledge and identify gaps that will affect upcoming learning.

Differential Item Functioning (DIF): A statistical technique used to detect whether specific test items perform differently for subgroups (for example, by gender or language background) in ways unrelated to the skill being measured. Used in fairness audits.

Formative assessment: Ongoing, low-stakes assessment during instruction, used to provide immediate feedback and guide instructional adjustments.

Ipsative assessment: Assessment that compares a learner’s current performance to their own previous performance, measuring personal growth rather than group rank.

Norm-referenced assessment: Results interpreted by comparing performance to a larger peer group, typically reported as percentiles.

Reliability: The consistency of an assessment. A reliable tool produces similar results under similar conditions and across different graders.

Rubric: A scoring guide defining quality criteria at each performance level. Rubrics make grading consistent, transparent, and defensible.

Summative assessment: Administered at the end of a learning period to evaluate overall achievement against stated objectives.

Universal Design for Assessment (UDA): An approach that proactively removes barriers from assessment design rather than adding accommodations after the fact. Includes accessible language, flexible formats, and elimination of construct-irrelevant complexity.

Validity: The accuracy of an assessment, that is, the degree to which evidence supports the intended interpretation of its scores. Validity is not a property of the test itself, but of the conclusions drawn from scores. If a math test inadvertently measures reading speed, the validity of the math score is compromised.

Zone of Proximal Development (ZPD): The optimal instructional zone where a student can succeed with targeted support but not without it. Effective formative assessment identifies each learner’s ZPD, allowing teachers to provide challenge that accelerates growth without causing frustration.
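To make three of the glossary terms concrete, here is a minimal Python sketch showing how the same raw score reads differently under criterion-referenced, norm-referenced, and ipsative interpretation. The function names, the peer scores, and the sample numbers are all hypothetical and exist only for illustration. The 75% cut score simply mirrors the example in the criterion-referenced entry above.

```python
def criterion_referenced(score: float, cut_score: float = 75.0) -> bool:
    """Pass/fail against a fixed standard, independent of other learners."""
    return score >= cut_score


def percentile_rank(score: float, peer_scores: list[float]) -> float:
    """Norm-referenced view: percentage of peers scoring strictly below this score."""
    below = sum(1 for s in peer_scores if s < score)
    return 100.0 * below / len(peer_scores)


def ipsative_gain(current: float, previous: float) -> float:
    """Ipsative view: change relative to the learner's own earlier performance."""
    return current - previous


# Hypothetical data: one learner's score and a small peer group.
peers = [55, 60, 62, 70, 71, 78, 80, 85, 90, 95]
score = 72

print(criterion_referenced(score))        # False (below the 75 cut score)
print(percentile_rank(score, peers))      # 50.0 (5 of 10 peers scored lower)
print(ipsative_gain(score, previous=58))  # 14 (points gained since last attempt)
```

The same 72 is a fail, a mid-pack result, and a solid personal gain, depending on which question you are asking. That is why the interpretation needs to be chosen before the scores come in.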

Best Practices for Creating Effective Educational Assessments

1. Align every item to a learning objective: Map each question to something explicitly taught. Unaligned items generate noise, not signal, and erode learner trust in the process.

2. Use a balanced mix of types: Combine diagnostic, formative, summative, and authentic methods across the learning cycle. No single assessment type captures the full picture.

3. Design for validity first: Choose formats that match the skill being measured. A multiple-choice quiz is a weak tool for assessing reasoning quality. A single essay is an inefficient way to assess factual recall. Match the tool to the target.

4. Build in reliability: Standardize instructions, train graders, and create rubrics before assessment begins, not after the first round of inconsistent scoring.

5. Design for fairness from the start: Apply MAP 2.0 before writing questions. Build UDA principles into your assessment design process from the outset rather than adding accommodations after the fact.

6. Give specific, actionable feedback: Go beyond scores. Tell learners what worked, what did not, and what the concrete next step is. Then build in time and structure for students to actually use that feedback.

7. Use technology deliberately: Automate what saves time and improves consistency. Maintain human oversight for everything that carries real stakes or requires judgment about individual learners.

8. Review and refine every cycle: After every assessment, analyze which questions worked, which were too easy or hard, and which produced unexpected results. Treat your assessments as living tools, not fixed artifacts.
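One way to check whether practice 4 is working is to have two graders score the same set of responses with the same rubric and measure how often they agree beyond chance. The sketch below uses Cohen's kappa, a standard agreement statistic. It is my choice for illustration, not something the practices above prescribe, and the rubric levels and scores are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Agreement between two graders, corrected for agreement expected by chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of responses.")
    n = len(rater_a)
    # Proportion of responses where both graders chose the same rubric level.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from how often each grader used each level.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[level] * counts_b[level] for level in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both graders used one identical level for every response
    return (observed - expected) / (1 - expected)


# Hypothetical rubric levels assigned by two graders to the same eight essays.
grader_1 = ["A", "A", "B", "B", "C", "C", "A", "B"]
grader_2 = ["A", "A", "B", "C", "C", "C", "A", "A"]

print(round(cohens_kappa(grader_1, grader_2), 2))  # 0.63
```

A kappa near 1 suggests the rubric is being applied consistently. A low value is a signal to revisit the rubric wording or grader training before the scores are used for anything that matters.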

How to Create a Data-Driven Online Assessment in 6 Steps

Step 1: Define the Objective: Start with a specific, measurable learning outcome (for example, “Students will identify cause-and-effect relationships using textual evidence”). Every subsequent decision flows from this.

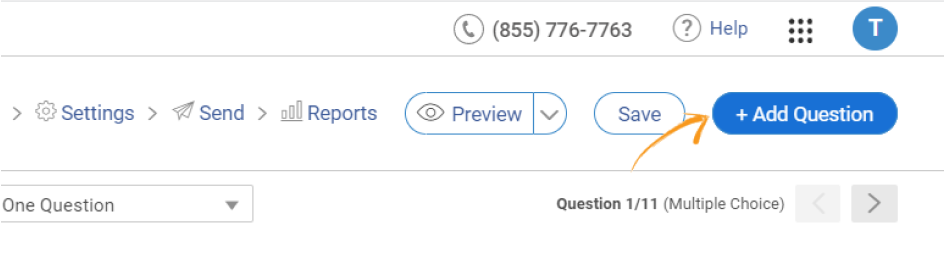

Step 2: Select Your Creation Method: Build from scratch, use a template, or use AI to generate questions from your existing materials, such as textbook chapters, lecture notes, or uploaded documents. Platforms like ProProfs Quiz Maker support all three approaches and integrate with most LMS environments, which removes the manual export-import cycle between the quiz tool and the LMS.

Step 3: Diversify Question Types: Include recall-based and application-based items. Use formats that match your objectives: hotspot questions for visual analysis, video response for communication skills, scenario-based items for applied reasoning, and multiple-choice for efficient knowledge recall.

Step 4: Automate Grading and Configure Feedback: Assign point values and write specific feedback for each answer choice, including wrong answers, so students understand not just their score but the reasoning behind it.

Step 5: Configure Security and Accessibility: Enable time limits, question randomization, and tab-switching prevention for high-stakes assessments. Test the platform on multiple devices and with assistive technologies before deployment.

Step 6: Analyze, Act, and Iterate: Review individual and cohort-level reports. Use Question Level Analysis heatmaps to identify which topics need reteaching or enrichment. Group students based on results. Refine questions that performed poorly for the next cycle.
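If your platform lets you export raw responses, the question-level review in Step 6 can also be approximated by hand. The sketch below computes two classic item statistics: difficulty (the share of students who answered correctly) and a simple upper-lower discrimination index (how much better the top-scoring half did than the bottom-scoring half on that item). The data, the function name, and the half-split are assumptions for illustration. This is not how any particular platform builds its reports.

```python
def item_analysis(responses: list[list[int]]) -> list[dict]:
    """Per-item difficulty and upper-lower discrimination from 0/1 response rows."""
    n_students = len(responses)
    n_items = len(responses[0])
    # Rank students by total score, then compare the top and bottom halves.
    ranked = sorted(responses, key=sum, reverse=True)
    half = n_students // 2
    upper, lower = ranked[:half], ranked[-half:]
    report = []
    for i in range(n_items):
        difficulty = sum(row[i] for row in responses) / n_students
        discrimination = (sum(row[i] for row in upper) - sum(row[i] for row in lower)) / half
        report.append({"item": i + 1, "difficulty": difficulty, "discrimination": discrimination})
    return report


# Hypothetical export: one row per student, one column per question (1 = correct).
responses = [
    [1, 1, 1, 0],
    [1, 1, 0, 1],
    [1, 1, 1, 0],
    [1, 0, 0, 1],
    [1, 0, 0, 1],
    [1, 0, 0, 0],
]

for row in item_analysis(responses):
    print(row["item"], round(row["difficulty"], 2), round(row["discrimination"], 2))
```

In this made-up data, question 1 is answered correctly by everyone and so tells you nothing about who needs reteaching, while question 4 has a negative discrimination value, meaning weaker students outperformed stronger ones on it. An item like that is worth rereading for ambiguity or a miskeyed answer before the next cycle.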

How to Measure Assessment Effectiveness

Creating an assessment is step one. Knowing whether it works is what separates a one-time data collection event from a continuous improvement tool.

Analyze performance patterns, not just averages: Which questions did most learners miss? Which were near-universally correct? These patterns reveal whether your assessment is well-calibrated or systematically missing the mark.

Gather learner feedback: Ask students whether the assessment felt fair, clear, and relevant. Did the questions reflect what was taught? Did the feedback help them understand what to do differently? Their responses reveal validity problems that score data alone will not surface.

Review individual question quality: For each item, is it unambiguous? Is the difficulty appropriate? Does it actually assess the stated objective? Remove or rewrite anything that fails those checks before the next deployment.

Cross-check with other performance indicators: If assessment results do not correlate with class participation, project quality, or instructor observation, investigate the mismatch. Unexplained divergence often signals a validity or reliability problem.

Turn insights into action: Use findings to adjust pacing, redesign underperforming sections, or refine rubric criteria. The most effective educators treat their assessments the same way they treat their lesson plans: always in draft, always improvable.
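The cross-check described above can be run as a quick correlation between assessment scores and another indicator, such as project grades. Here is a minimal sketch using the Pearson correlation coefficient. The scores are invented, and the 0.3 threshold for flagging a mismatch is an arbitrary assumption you would want to set for your own context.

```python
from math import sqrt


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation between two equally long lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("Need two lists of the same length with at least 2 values.")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("Correlation is undefined when one list has no variation.")
    return covariance / (spread_x * spread_y)


# Hypothetical scores for the same six students, in the same order.
quiz_scores = [55, 62, 70, 78, 85, 91]
project_scores = [58, 60, 72, 75, 88, 90]

r = pearson_r(quiz_scores, project_scores)
MISMATCH_THRESHOLD = 0.3  # assumed cut-off, not a research-backed standard
print("investigate mismatch" if r < MISMATCH_THRESHOLD else "indicators broadly agree")
```

A weak correlation does not tell you which measure is wrong. It only tells you the two are not capturing the same thing, which is the cue to look closer at item quality, rubric use, or what the other indicator is actually rewarding.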

The Point of All of It

Every assessment exists to answer one question: Is the instruction working, and what needs to change?

That only happens if the data is used. If it sits in a gradebook, it is just a number.

The most effective educators treat assessment as continuous. They check where students are, adjust during learning, and use results to guide what comes next.

This is not about doing more. It is about using the right assessment at the right time and acting on it.

The shift is simple: stop treating assessment as judgment and start using it as evidence.

Start with one change this week. Add a 5-question formative check to your next lesson using ProProfs Quiz Maker’s free plan. Review the results the same day. That simple feedback loop is what everything else builds on.

Frequently Asked Questions

What are the 4 types of assessment in education?

The four core types are diagnostic, formative, summative, and standardized. Each serves a different purpose and works at a different point in the learning cycle. Most high-quality programs also incorporate performance-based, authentic, and adaptive assessment to capture the full range of what students know and can do.

What is an example of an educational assessment?

A weekly five-question quiz with automated feedback is formative. A final exam or certification test is summative. A pre-course skills checklist is diagnostic. A student portfolio documenting a semester's work is authentic. A computer-adaptive reading test that adjusts difficulty per question is adaptive. Each serves a different purpose in the educational assessment process.

What are the three main kinds of educational assessment?

Diagnostic (before instruction, to establish a baseline), formative (during instruction, to guide learning in real time), and summative (after instruction, to evaluate overall achievement). Together, they cover every stage of the learning cycle.

What makes an educational assessment fair and reliable?

Fairness means removing construct-irrelevant barriers so all students can demonstrate what they actually know, regardless of language background, cultural context, or access to resources. Reliability means the assessment produces consistent results under similar conditions and across different graders. Both depend on clear objectives, standardized rubrics, and Universal Design for Assessment principles built in from the start.

What is the difference between norm-referenced and criterion-referenced assessment?

Norm-referenced tests compare a student's performance to their peer group (for example, SAT percentiles). Criterion-referenced tests measure performance against a fixed standard of mastery (for example, "score 80% to pass this unit"). Norm-referenced data is best for selection and placement. Criterion-referenced data is best for certifying whether a specific skill has been mastered.

What is the Zone of Proximal Development, and how does assessment support it?

The ZPD is the optimal instructional zone where a student can succeed with targeted support, just beyond independent capability but reachable with guidance. Formative and adaptive assessments identify each learner's ZPD so teachers can deliver instruction at precisely the right level of challenge, maximizing learning without causing frustration.

How can AI improve educational assessment?

Education-specific AI platforms improve assessment through adaptive difficulty adjustment, automated question generation, instant feedback delivery, cohort-level heatmap analytics, and early-warning systems for at-risk learners. The critical qualifier: sustained learning gains require AI with intentional pedagogical design, not generic tools used as shortcuts to avoid doing the work.
