I’ve sat in on a lot of hiring decisions, and the costly ones rarely happened because someone was careless. They happened because someone was confident with the wrong information. A resume that looked airtight. An interview that went beautifully. A candidate who was sharp in conversation and checked out after ninety days.

After years of building hiring processes and watching them produce inconsistent results, one pattern became impossible to ignore: the tools most teams trust most are the ones research ranks lowest for predicting actual performance.

What follows are recruitment assessment methods that hold up under scrutiny. What they measure, how to run them well, where they fall short, and which roles they suit best.

What Is a Recruitment Assessment?

A recruitment assessment is a structured, validated tool used during hiring to objectively measure a candidate’s job-relevant skills, cognitive ability, personality, and potential. Unlike a standard interview, it produces comparable, scored data across every candidate, giving hiring teams a basis for decisions grounded in observable evidence rather than impression.

The concept that separates useful assessments from noise is predictive validity, a number between 0 and 1.0 measuring how accurately an assessment forecasts real job performance:

| Predictive Validity Score | What It Means |
| --- | --- |
| Below 0.30 | Too weak to build a process around |
| 0.30 – 0.49 | Moderate; useful when combined with stronger methods |
| 0.50 and above | Strong; worth anchoring your process on |

Most hiring teams are unknowingly relying on methods that fall in the bottom tier.

How Accurate Are Your Hiring Methods?

Most organizations default to the methods that feel most familiar: resumes, open-ended interviews, and years of experience as a proxy for capability. Here’s where those methods actually land, based on Schmidt and Hunter’s meta-analysis in the Journal of Applied Psychology:

| Assessment Method | Predictive Validity | Admin Time | Bias Risk |
| --- | --- | --- | --- |
| Work Sample Tests | 0.54 | 60–90 min | Low |
| Cognitive Ability Tests | 0.51 | 20–30 min | Medium |
| Structured Interviews | 0.51 | 45–60 min | Low |
| Job Knowledge Tests | 0.48 | ~45 min | Medium |
| Integrity Tests | 0.41 | ~15 min | Low |
| Unstructured Interviews | 0.38 | 45–60 min | High |
| Personality Assessments | 0.31 | ~15 min | Very Low |
| Years of Education | 0.10 | – | High |

Unstructured interviews score 0.38. Years of education scores 0.10, barely above chance. Work samples and cognitive tests used together push combined validity to 0.63, roughly 17% higher than work samples alone and 24% higher than cognitive tests alone.
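The arithmetic behind those comparisons is easy to check yourself. The sketch below is illustrative only: the function names `validity_tier` and `relative_lift` are hypothetical, the tier cut-offs come from the first table in this guide, and the coefficients are copied from the table above.

```python
def validity_tier(r: float) -> str:
    """Classify a validity coefficient using this guide's three tiers."""
    if r < 0.30:
        return "weak"
    if r < 0.50:
        return "moderate"
    return "strong"


def relative_lift(combined: float, single: float) -> float:
    """Percentage improvement of a combined battery over a single method."""
    return (combined - single) / single * 100


# Coefficients copied from the table above.
WORK_SAMPLE = 0.54
COGNITIVE = 0.51
COMBINED = 0.63

print(validity_tier(0.38))  # unstructured interviews
print(validity_tier(0.10))  # years of education
print(round(relative_lift(COMBINED, COGNITIVE)))    # lift over cognitive tests alone
print(round(relative_lift(COMBINED, WORK_SAMPLE)))  # lift over work samples alone
```

The lift works out to about 24% relative to cognitive tests and about 17% relative to work samples, so the size of the gain depends on which single method you start from.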

Pick two or three complementary methods per role, run them consistently for every candidate, and weight scores against pre-defined performance criteria.
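One way to weight scores against pre-defined criteria is a simple weighted average. This is a minimal sketch, not a prescribed formula: the method names, the weights, and the 0–100 scale are all assumptions made for illustration.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-method scores (each on a 0-100 scale) into one composite."""
    if set(scores) != set(weights):
        raise ValueError("every method needs both a score and a weight")
    total_weight = sum(weights.values())
    return sum(scores[m] * weights[m] for m in scores) / total_weight


# Hypothetical weights, agreed before any candidate is scored.
WEIGHTS = {"work_sample": 0.5, "cognitive": 0.3, "structured_interview": 0.2}

# Hypothetical candidate results on a 0-100 scale.
candidate = {"work_sample": 80, "cognitive": 70, "structured_interview": 90}

composite = round(weighted_score(candidate, WEIGHTS), 1)
print(composite)
```

Dividing by the total weight means the weights don’t have to sum to exactly 1. What matters is that they are fixed before the first candidate is scored.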

What Are the Recruitment Assessment Methods Worth Using?

Each method below covers what it measures, how to run it properly, where it tends to fail, and which roles it suits best.

1. Job Knowledge Tests

Job knowledge tests measure what a candidate already knows about the domain your role operates in: platform proficiency, regulatory knowledge, technical concepts, and process familiarity.

Role in Screening

Job knowledge assessments are one of the best Stage 2 (post-resume screening) filters available. A 20–30 minute online quiz screens out candidates with significant knowledge gaps before any human interview time is spent.

How to Build One Fast

ProProfs Quiz Maker’s AI quiz generator lets you create a scored, ready-to-deploy test in minutes by:

- Typing a prompt (“Generate 20 questions for a mid-level financial analyst role”)

- Uploading a job description

- Uploading training materials or manuals and having the AI generate questions directly from them

Candidates complete the quiz online, scores flow into your pipeline automatically, and randomized question banks mean no two candidates see the test in the same order.

Keeping It Honest

ProProfs Quiz Maker includes built-in proctoring and anti-cheating controls: time limits per question, tab-switch detection, randomized question and answer order, and webcam monitoring. Scores reflect what candidates actually know.

Watch: How to Prevent Cheating in Online Assessments

Watch Out For

Job knowledge tests measure what someone knows today, not how well they apply it under pressure. Pair with a work sample or structured interview.

Best for: Technical roles, compliance, customer support, sales, finance, operations.

2. Structured Behavioral Interviews

Standard interviews are unstructured: different questions for different candidates, no consistent scoring, outcomes shaped by first impressions. Structured behavioral interviews fix this by replacing open-ended conversation with a repeatable, evidence-based process built on the STAR framework:

| Stage | What You’re Asking |
| --- | --- |
| Situation | What was the context? |
| Task | What were they responsible for? |
| Action | What did they specifically do? |
| Result | What was the measurable outcome? |

How to Run It Well

- Identify the 3–5 competencies the role genuinely depends on

- Write 2–3 STAR questions per competency

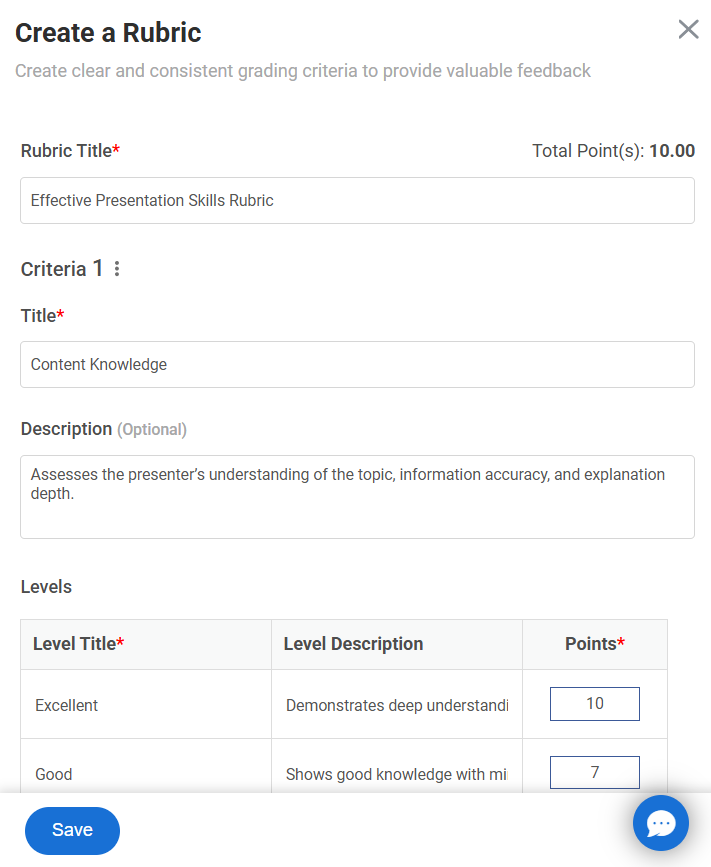

- Build a scoring rubric before any interviews begin

- Have every interviewer score independently before any group discussion

That last point matters more than most teams realize. When individual scores are locked in before discussion, you avoid the most common panel interview failure: the most senior person in the room decides the outcome before anyone else speaks.
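If you track panel scores in a tool of your own, the lock-before-discussion rule can be enforced in a few lines. The sketch below is hypothetical throughout (the `PanelScorecard` class, the interviewer names, and the 1–5 scale are assumptions): scores stay hidden until every interviewer has submitted, and a submitted score can’t be changed.

```python
from statistics import mean


class PanelScorecard:
    """Collects interviewer scores and hides them until everyone has submitted."""

    def __init__(self, interviewers):
        self._expected = set(interviewers)
        self._scores = {}

    def submit(self, interviewer, score):
        if interviewer not in self._expected:
            raise ValueError(f"unknown interviewer: {interviewer}")
        if interviewer in self._scores:
            raise ValueError("scores are locked once submitted")
        self._scores[interviewer] = score

    def reveal(self):
        """Return all scores and their average, but only when the panel is complete."""
        missing = self._expected - set(self._scores)
        if missing:
            raise RuntimeError(f"waiting on: {sorted(missing)}")
        return dict(self._scores), mean(self._scores.values())


# Hypothetical three-person panel scoring one competency on a 1-5 scale.
card = PanelScorecard(["ana", "ben", "chloe"])
card.submit("ana", 4)
card.submit("ben", 3)
card.submit("chloe", 5)
scores, average = card.reveal()
print(scores, average)
```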

Here’s what a rubric actually looks like, using a working example for the competency “coaching and developing others” on a Sales Manager role:

| Score | Response Characteristics |
| --- | --- |
| 1 – Weak | Vague or generic (“I try to support my team”). No specific person, situation, or outcome named. |
| 3 – Average | Names a situation and describes some actions taken, but outcome is unclear or the candidate’s role is ambiguous. |
| 5 – Strong | Names a specific underperformer, describes exactly what they changed and how, ties it to a measurable result (quota attainment, retention, promotion). |

Sample question for this competency: “Tell me about a time you had to turn around an underperforming team member. What specifically did you do, and what changed as a result?”

Watch for candidates who use “we” when describing their own actions; probe for what they specifically did. Vague outcomes (“things improved”) are a signal to push for numbers.

Sample STAR questions by competency:

| Competency | Question |
| --- | --- |
| Conflict resolution | “Tell me about a time a colleague disagreed with your approach in front of others. What did you do?” |
| Prioritization | “Describe a time you had more on your plate than you could realistically deliver. How did you decide what got your attention?” |
| Customer recovery | “Walk me through a time a client was ready to leave. What happened and what did you do?” |
| Analytical thinking | “Tell me about a decision you made with incomplete data. How did you approach it?” |

Watch Out For

Behavioral interviews depend on candidates having relevant past experience. For early-career roles or career changers, pair with a situational judgment or work sample component.

Best for: Management, sales, customer success, operations.

Pro Tip: For teams hiring at scale, video interview quizzes let you run the same structured format asynchronously, with every candidate answering the same questions on camera and reviewers scoring independently before any discussion.

Watch: How to Create a Video Interview Quiz

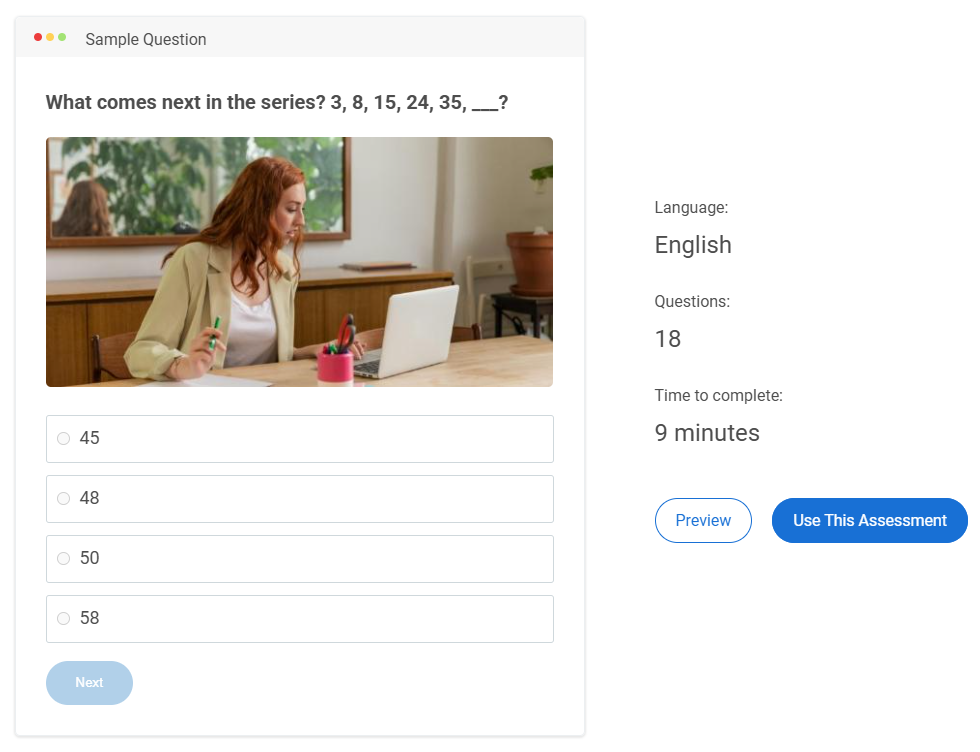

3. Cognitive Ability Tests

Cognitive ability tests measure how quickly and accurately someone processes information, identifies patterns, and works through problems. They’re not just IQ tests. They’re job-relevant reasoning assessments tied directly to what the role requires.

On their own, they reach a predictive validity of 0.51. Combined with a structured interview or work sample, that rises to 0.63, the highest accuracy available from a two-method battery.

How to Run It Well

Match the test type to what the role actually demands:

| Role Type | Test Focus |
| --- | --- |
| Financial analysts | Numerical reasoning |
| Content strategists | Verbal reasoning |
| Software engineers | Logical sequencing, spatial reasoning |
| Operations managers | Abstract reasoning, problem-solving |

Set a minimum threshold score before anyone advances to interviews. Here’s how to calculate a defensible threshold without over-filtering:

- Pull the average score from your last 10 successful hires in the role

- Subtract one standard deviation; that becomes your floor

- Review quarterly: if strong performers scored near the floor, leave it; if weak performers scored above it, raise it
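The floor calculation above can be sketched in a few lines. The scores below are invented for illustration, and the use of the sample standard deviation (`statistics.stdev`) rather than the population version is my assumption; with only ten data points the two differ slightly.

```python
from statistics import mean, stdev


def score_floor(successful_hire_scores):
    """Return the mean minus one sample standard deviation."""
    if len(successful_hire_scores) < 2:
        raise ValueError("need at least two scores to estimate spread")
    return mean(successful_hire_scores) - stdev(successful_hire_scores)


# Hypothetical test scores for the last 10 successful hires in the role.
last_ten = [32, 35, 28, 40, 31, 36, 29, 38, 33, 34]

floor = score_floor(last_ten)
print(f"Average: {mean(last_ten):.1f}, floor: {floor:.1f}")
```

Ten hires is a small sample, so treat the result as a starting point for the quarterly review rather than a fixed cut-off.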

Tools like Criteria’s CCAT, SHL, and TestGorilla provide normed benchmarks so you’re comparing against population baselines, not just your own pipeline.

Watch Out For

Cognitive tests can show a higher adverse impact across certain demographic groups when used as a sole filter. Pair them with low-bias methods like work samples or structured interviews.

Best for: Software engineering, data science, finance, operations, and product management.

4. Work Sample Tests

Work sample tests ask candidates to do the actual job before they have it, which is why they carry the highest single-method predictive validity at 0.54.

Common formats by role:

| Role | Work Sample Format |
| --- | --- |
| Developer | Live coding challenge |
| Consultant | Structured case analysis |
| Content strategist | Write from a real brief |
| Financial analyst | Dataset with a buried anomaly to find and explain |
| Designer | Brand brief with a rationale requirement |

How to Run It Well

Building the brief:

- Use a real scenario your team has faced, anonymized if needed

- Specify the deliverable format exactly (e.g., “a 300-word recommendation with supporting data, not a slide deck”)

- State the time limit clearly; 60–90 minutes is the standard

Scoring before you look: write your rubric before reviewing submissions. Here’s a working example for a content strategist brief:

| Criterion | 1 – Weak | 3 – Average | 5 – Strong |
| --- | --- | --- | --- |
| Brief alignment | Ignores key constraints | Addresses main ask, misses nuance | Every choice connects back to brief |
| Argument clarity | Hard to follow | Coherent but generic | Clear, specific, easy to act on |
| Audience awareness | Generic tone | Some adaptation | Distinctly written for stated audience |
| Craft | Multiple errors | Clean but flat | Compelling and polished |

On assessment security: Generative AI tools are part of today’s cheating conversation. Design your way around it.

- Randomized question banks: no two candidates receive the same test

- Open-ended scenario tasks: require original reasoning that a prompt can’t replicate

- Adaptive testing: difficulty adjusts in real time

- Platform-level controls: Assessment platforms may include capabilities such as tab-switch detection, screen monitoring, and plagiarism detection.
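To make the randomized question bank idea concrete, here is a minimal sketch of a per-candidate draw. It doesn’t reflect how any particular assessment platform works; the bank of question IDs, the `draw_test` function, and the candidate identifiers are all hypothetical.

```python
import random


def draw_test(bank, n_questions, candidate_id):
    """Draw a candidate-specific subset of questions in random order."""
    # Seeding with the candidate ID makes the draw reproducible for later audits.
    rng = random.Random(candidate_id)
    return rng.sample(bank, n_questions)


# Hypothetical bank of 60 question IDs.
BANK = [f"Q{i:03d}" for i in range(1, 61)]

test_a = draw_test(BANK, 20, "candidate-1001")
test_b = draw_test(BANK, 20, "candidate-1002")
print(test_a[:5])
print(test_b[:5])
```

A random draw makes identical tests very unlikely rather than impossible, and the protection grows with the size of the bank relative to the test length.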

Watch Out For

Work samples take real time from both candidates and evaluators. For high-volume pipelines, use automated scoring wherever possible and save human review for finalists.

Best for: Development, design, content creation, financial modeling, consulting.

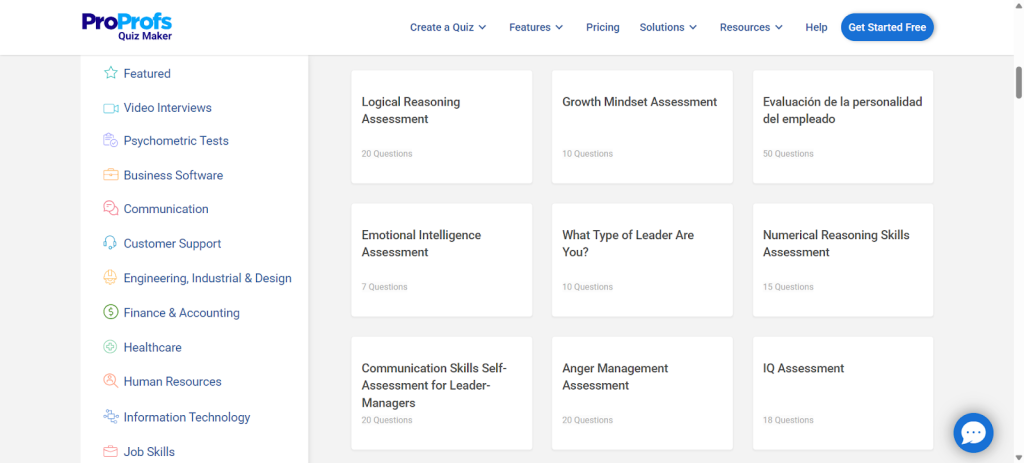

5. Psychometric and Personality Assessments

Psychometric and personality assessments tell you how someone is wired: their behavioral tendencies, work style, and the conditions where they naturally perform well. Used correctly, they make your interviews sharper, not redundant.

Explore Psychometric Tests for Recruitment

The most research-backed trait to measure is conscientiousness, the single most consistent personality predictor of job performance across all occupations.

Other traits worth measuring, depending on the role:

| Trait | Most Relevant For |
| --- | --- |
| Conscientiousness | All roles; universal predictor |
| Extraversion | Sales, client-facing, team leadership |
| Agreeableness | Collaborative, high-dependency environments |
| Emotional stability | High-pressure, ambiguous, or conflict-heavy roles |

How to Run It Well

Use scores as interview preparation, not as a gatekeeping filter. Here’s what that looks like in practice:

If conscientiousness is low:

Ask: “Walk me through how you manage your workload when three things are due at the same time. What does your system actually look like?”

Listen for: specific tools, named habits, evidence of follow-through under pressure. Vague answers (“I prioritize”) aren’t enough.

If emotional stability is low:

Ask: “Tell me about a time things at work went sideways in a way you didn’t see coming. How did you handle the first 48 hours?”

Listen for: self-awareness, composure in the telling, what they actually did vs. what they felt.

If agreeableness is low:

Ask: “Tell me about a time you pushed back on a decision your manager had already made. How did you approach it and what happened?”

Listen for: directness without aggression, ability to disagree and still execute.

Watch Out For

Personality scores can shift based on a candidate’s stress level or mindset on the day of testing.

Never use a personality score as a sole disqualifying criterion.

Best for: Leadership, management, sales, customer-facing roles.

6. Emotional Intelligence (EI) Assessments

EI is the ability to understand, manage, and respond to emotions, both your own and others’. For people-facing roles, it’s a primary performance driver, not a secondary consideration. Research from TalentSmart found that EI accounts for 58% of performance variance across all job types.

EI assessments measure four dimensions:

| Dimension | What It Reveals |
| --- | --- |
| Self-awareness | Do they understand how they come across to others? |
| Self-regulation | Can they stay composed when things go sideways? |
| Social awareness | Do they read team dynamics and situations accurately? |
| Relationship management | Can they navigate conflict without leaving damage behind? |

How to Run It Well

Use validated tools like the EQ-i 2.0 or MSCEIT for objective scoring. Pair scores with situational judgment questions that are scored against behavioral anchors.

Sample scenarios by dimension:

| Dimension | Scenario to Present |
| --- | --- |
| Self-regulation | “You’re presenting to a client and they dismiss your recommendation in front of your team. What do you do in the moment?” |
| Social awareness | “You notice a usually talkative team member has gone quiet in meetings for two weeks. Nobody else seems to have noticed. What, if anything, do you do?” |
| Relationship management | “You overhear a colleague venting about a decision you made. You weren’t meant to hear it. What do you do?” |

What strong vs. weak responses look like:

| Response Quality | Characteristics |
| --- | --- |
| Strong | Direct, non-defensive, addresses the issue without escalating it |
| Weak | Avoidance, passive retaliation, over-escalation, or performative calm that doesn’t hold under follow-up |

Watch Out For

EI tests are vulnerable to socially desirable responding: candidates answering the way they think you want them to. Pairing scores with behavioral questions about specific past situations significantly reduces this.

Best for: Healthcare, customer support, HR, and team leadership.

7. Call Handling Simulations

A 15-minute simulation gives you more signal than multiple interview rounds for customer-facing roles. You’re not asking how someone would handle a difficult caller. You’re watching them do it.

How to Run It Well

Building your scenario library:

Start by pulling your team’s five most common and five most challenging call types. Write a caller persona for each one with:

- A specific complaint or request (not a vague “they’re upset”)

- An emotional state (frustrated but reasonable / hostile / confused / in genuine distress)

- A trigger that escalates the call if the candidate handles it poorly

Example scenario:

Caller persona: A customer who received the wrong order for the second time. They were calm the first time. They are not calm now. They’ve already been on hold for 12 minutes.

Escalation trigger: If the candidate leads with policy (“our standard process is…”) before acknowledging the frustration, the caller gets louder.

Scoring rubric:

| Dimension | What to Look For |
| --- | --- |
| Verbal clarity | Easy to follow under pressure? |
| Empathy | Genuine acknowledgment, or scripted sympathy? |
| Information accuracy | Correct answers, not just confident ones? |
| De-escalation | Lowers the temperature without dismissing the issue? |
| Composure | Stays steady when the caller pushes back? |

Have candidates draw their scenario randomly at the start. Test your scenarios with current team members first; if your best rep struggles with the script, rewrite it.

Watch Out For

Unconvincing caller personas make the exercise feel artificial and depress candidate performance. The persona is as important as the scenario itself.

Best for: Customer support, inside sales, healthcare intake, retail.

8. Data Analysis Exercises

A data analysis exercise tests critical thinking, tool proficiency, and, critically, the ability to communicate findings to someone who doesn’t live in the data. That last part is where most candidates fall short, and it’s usually the most important part of the job.

How to Run It Well

Use data that mirrors what the candidate will actually work with:

| Role | Dataset to Use |
| --- | --- |
| Digital marketing analyst | GA4 export with underperforming campaigns buried in it |
| Financial analyst | P&L statement with a margin anomaly that needs explaining |
| Business intelligence | Multi-table dataset requiring joins and synthesis |

Structure the brief like this:

- Here is the dataset, in the format your team actually uses

- Here are the tools available: the same ones your team uses, no unfamiliar software

- You have 60 minutes

- Present your findings to a non-technical stakeholder in five minutes

That presentation requirement is where the exercise earns its keep. Score it separately from the analysis itself:

| Criterion | Strong | Weak |
| --- | --- | --- |
| Insight quality | Surfaces non-obvious finding with clear implication | States obvious trends without interpretation |
| Stakeholder framing | Explains “so what” before “what” | Leads with methodology |
| Recommendation clarity | Specific, actionable next step | Vague or qualified to the point of uselessness |
| Data accuracy | Numbers are correct and correctly interpreted | Errors or misattributions in the analysis |

Watch Out For

Provide the same tools and a brief orientation to the dataset before the clock starts. Candidates who’ve never used Tableau shouldn’t be penalized for tool unfamiliarity if the role doesn’t require it from day one.

Best for: Finance, data science, business intelligence, SEO/PPC, operations, product management.

9. Creative Design Assessments

A portfolio shows past work at its best. A design brief shows current judgment under real constraints, and judgment is what you’re actually hiring for.

How to Run It Well

Write a brief that forces real decisions. Vague briefs produce vague work. A useful brief includes:

- A specific industry with a named competitive context (“a challenger brand in the crowded direct-to-consumer supplement space”)

- A defined audience with actual demographics (“women 35–50 who distrust traditional healthcare”)

- A clear tone constraint (“authoritative but not clinical, warm but not soft”)

- A format requirement (“logo, one brand color, one typeface, with written rationale for each”)

That rationale requirement is the most important part. Two candidates can produce visually similar work. One can explain the strategic thinking behind every choice. That’s the distinction that matters in any client-facing or cross-functional role.

Scoring rubric:

| Criterion | What You’re Assessing |
| --- | --- |
| Visual coherence | Does it hold together as a system, not just individual elements? |
| Alignment to brief | Does every choice connect back to the stated audience and tone? |
| Design craft | Is the execution technically sound? |
| Clarity of rationale | Can they articulate why, not just what? |

Share this rubric with candidates before they start. Additional options depending on seniority:

- A branded pitch deck with a live presentation component

- Social media graphics for a specific campaign brief

- A full landing page mock-up with copy direction included

Watch Out For

Keep the scope under two hours and say so upfront. If your brief consistently takes longer than that, the problem is the brief.

Best for: Graphic design, brand management, creative direction, content design, and marketing agencies.

How Do You Match Assessment Methods to the Right Role?

Not every role needs every method. Over-assessing drives candidate drop-off and signals a poorly run process to the people you most want to attract. Use this as a starting framework and adjust based on what your specific role actually demands.

| Role Type | Recommended Combination | Primary Signal |
| --- | --- | --- |
| Technical (engineers, data scientists) | Cognitive test + work sample + structured interview | Can-do capability |
| Management | Behavioral interview + psychometric + leadership case study | Judgment and team dynamics |
| Customer-facing | EI assessment + call simulation + behavioral interview | Interpersonal performance |
| Creative | Portfolio review + design brief + structured interview | Strategic thinking and craft |
| Senior / Executive | Work sample + multi-stakeholder interviews + psychometrics + reference checks | Full-profile signal |

Two to three methods per role is the practical sweet spot.

How Do You Build an Assessment Process That Holds Up at Scale?

Choosing the right methods is half the work. Running them consistently across dozens or hundreds of candidates is where most programs fall apart.

Step 1: Start With a Job Analysis

Before selecting any assessment, define exactly what the role demands. A useful job analysis answers three questions:

- What does strong performance look like in the first 90 days?

- What are the 3–5 competencies that most predict success in this specific role?

- What have past failures in this role had in common?

Sixty minutes with the hiring manager and one or two top performers in the role is usually enough to answer all three.

Step 2: Build a Multi-Hurdle Funnel

| Stage | Method | Purpose |
| --- | --- | --- |
| Stage 1 | Automated resume screen | Filter for minimum qualifications |
| Stage 2 | 15–20 min skills quiz or cognitive screen | Filter for cognitive fit before human time is spent |
| Stage 3 | Structured interview or work sample | Properly evaluate finalists |
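In code terms, a multi-hurdle funnel is a chain of filters where each stage only sees the candidates who cleared the previous one. The sketch below is a simplified illustration: the `Candidate` fields, the `SCREEN_FLOOR` value, and the sample pool are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    meets_minimum_qualifications: bool  # outcome of the Stage 1 resume screen
    screen_score: float                 # Stage 2 quiz or cognitive screen, 0-100


SCREEN_FLOOR = 60  # hypothetical cut-off, set before screening starts


def run_funnel(candidates):
    """Return the candidates who should advance to Stage 3."""
    stage_1 = [c for c in candidates if c.meets_minimum_qualifications]
    stage_2 = [c for c in stage_1 if c.screen_score >= SCREEN_FLOOR]
    return stage_2


# Hypothetical applicant pool.
pool = [
    Candidate("A", True, 72),
    Candidate("B", False, 95),
    Candidate("C", True, 41),
    Candidate("D", True, 60),
]

finalists = run_funnel(pool)
print([c.name for c in finalists])
```

Candidate B never reaches the quiz despite the high score, which is the point of ordering the cheap, automated filters first.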

Step 3: Write Scoring Rubrics Before Evaluations Begin

Write rubrics before you see any submissions. This removes the most common source of evaluator drift: calibrating expectations to whoever you saw first.

A rubric doesn’t need to be complex. Three anchor points per criterion, weak, average, and strong, with specific behavioral descriptions, are enough to align a panel of three evaluators.
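A rubric like that is easy to represent as plain data. The sketch below reuses two criteria from the content strategist rubric earlier in this guide; the `summarize` function, the three-person panel scores, and the `max_gap` rule for flagging disagreement are my own assumptions rather than an established standard.

```python
from statistics import mean

# Anchor descriptions taken from the content strategist rubric in this guide.
RUBRIC = {
    "Brief alignment": {
        1: "Ignores key constraints",
        3: "Addresses main ask, misses nuance",
        5: "Every choice connects back to brief",
    },
    "Argument clarity": {
        1: "Hard to follow",
        3: "Coherent but generic",
        5: "Clear, specific, easy to act on",
    },
}


def summarize(panel_scores, max_gap=2):
    """Average each criterion and flag criteria where evaluators disagree widely."""
    summary = {}
    for criterion, scores in panel_scores.items():
        if criterion not in RUBRIC:
            raise KeyError(f"no rubric entry for {criterion!r}")
        summary[criterion] = {
            "mean": round(mean(scores), 2),
            "needs_discussion": max(scores) - min(scores) > max_gap,
        }
    return summary


# Hypothetical independent scores from three evaluators.
panel = {"Brief alignment": [5, 4, 5], "Argument clarity": [1, 3, 5]}
result = summarize(panel)
print(result)
```

A wide spread on a single criterion usually means the anchor descriptions are being read differently, which is worth resolving before the next cohort.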

Step 4: Integrate Assessments With Your ATS

The assessment invite should trigger automatically when an application arrives. The score should flow directly into the candidate’s profile in your Applicant Tracking System (ATS), not into an email thread someone has to manually track.

Step 5: Close the Loop With Post-Hire Analytics

At 90 days, pull two lists: your top assessment scorers and your lowest. Compare them against manager performance ratings. Then ask:

- If top scorers are underperforming, the assessment is measuring something real but not job-relevant; revisit the job analysis

- If low scorers are outperforming, your threshold is set too high, or the wrong method is filtering them out

- If there’s no correlation either way, the assessment isn’t adding signal; replace it

Run this quarterly. The process compounds.
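If you want a number rather than an eyeball comparison, a Pearson correlation between assessment scores and manager ratings is one simple option. This is a sketch under stated assumptions: the data is invented, the 1–5 rating scale is hypothetical, and with a handful of hires the result is noisy enough that it should inform a judgment, not replace one.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when one variable never changes")
    return cov / (spread_x * spread_y)


# Hypothetical data: assessment scores and 90-day manager ratings (1-5) for 8 hires.
assessment_scores = [55, 62, 70, 74, 80, 85, 90, 95]
manager_ratings = [2, 3, 3, 4, 3, 4, 5, 5]

r = pearson(assessment_scores, manager_ratings)
print(f"Correlation between score and rating: {r:.2f}")
```

One caveat: you only have performance data for people you hired, so the observed correlation tends to understate how well the assessment separates the full applicant pool.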

Watch: How to Review Quiz Reports & Statistics

What Are the Most Common Mistakes That Undermine Assessment Programs?

Even teams using the right methods consistently trip over the same three problems.

1. Using Static, One-Off Tests

The moment a candidate shares your test in an online forum, every subsequent candidate has an advantage. Build randomized question banks so no two candidates receive the same test.

2. Ignoring the Candidate Experience

Long or poorly designed assessment processes can reduce completion rates and discourage candidates from finishing. The candidates who drop out of a poorly designed process often have the most options.

3. Treating a Single Score as the Whole Decision

No single assessment can fully predict job performance. Use assessment results to support the hiring decision, not to make it on their own.

Build a Hiring Process That Gets Smarter Over Time

Most hiring mistakes aren’t made carelessly; they’re made with incomplete information and methods that were never designed to predict performance in the first place.

The framework in this guide won’t eliminate every bad hire. What it does is systematically raise the floor. When you score before discussing, write rubrics before evaluating, and track what assessment results actually predicted, the process gets more accurate with every cohort you run it on.

If you’re building this from scratch, ProProfs Quiz Maker is worth a look, with a question library of 100,000+, built-in proctoring, randomization, and ATS integrations that remove the operational friction of running structured assessments at scale.

Frequently Asked Questions

How long should a pre-employment assessment take?

For pre-screening, under 20 minutes. For role-specific skills tests, 60–90 minutes is reasonable with advance notice. For senior hires, up to two hours is defensible — but communicate the time commitment upfront and make the relevance obvious.

Are cognitive tests biased against certain demographic groups?

They can show an adverse impact when used in isolation. The fix is to pair them with lower-bias methods such as work samples, structured interviews, and personality assessments. Used together, these improve both predictive accuracy and fairness.

Can candidates cheat using AI tools like ChatGPT?

Yes, on traditional, predictable tests. The answer is better design: randomized question banks, open-ended scenario tasks, adaptive testing, and AI plagiarism detection built into your assessment platform.

What is the difference between culture fit and culture add?

Culture fit screens for similarity to the existing team, which amplifies bias and reduces the range of perspectives that drive better decisions. Culture add looks for candidates who share your core values but bring a different background or point of view. Well-designed behavioral interviews are the right tool for surfacing that.

How do I know if my assessments are actually working?

Correlate scores with 90-day and 6-month performance ratings. If top scorers are underperforming, the assessment is measuring the wrong thing. If low scorers are outperforming, you're filtering out people you should be advancing. Run this review quarterly.

What is the best recruitment assessment tool for high-volume hiring?

For teams hiring at scale, the priorities shift to consistency, automation, and integration. Platforms with question randomization, ATS integration, automated scoring, and proctoring controls — like ProProfs Quiz Maker, Vervoe, and TestGorilla — are built for this.

How do recruitment assessments reduce hiring bias?

Structured assessments reduce bias by replacing first-impression judgments with criteria-based scoring. When every candidate answers the same questions, is scored against the same rubric, and is evaluated independently before group discussion, the conditions under which bias operates most freely are removed.
